Orchestration Economics: From Disruption to Discontinuity (Chapter 1)

The software industry’s 2025 optimism masked structural transformation. When it surfaced in early 2026, the market had no framework to interpret it.

This is the latest excerpt from AGNT: The Orchestration Economics Manifesto - An Investment Framework for the Agentic Era. Each Thursday, I explore a major theme of the Manifesto and unpack the frameworks, adding extra context with more recent developments. Note: The figures and sequential references are taken directly from the larger Manifesto.

The software industry entered 2025 in what looked like a renaissance. AI launches pushed SaaS valuations higher. ServiceTitan’s IPO succeeded. Salesforce announced Agentforce. Investors believed the next growth cycle had begun. Beneath the optimism, something else was happening.

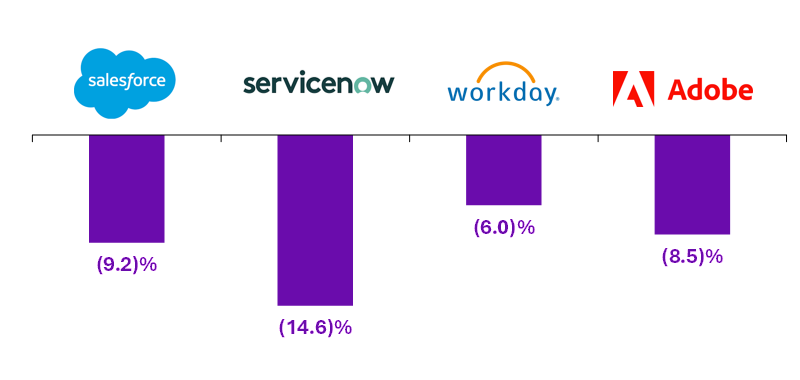

The software industry that began 2025 celebrating AI-driven growth ended the year confronting a different narrative: the AI-driven growth showing up in its top line mattered less than the perceived vulnerability of its fundamental business model. In early 2026, the surface cracked open. ServiceNow, Salesforce, Workday, and Adobe each lost between 6% and 15% of market capitalization in a single week of trading in February 2026.

That fallout continues. Each new release by Anthropic sends shudders through the markets. This week, its new financial plugins hit financial stocks. Meanwhile, Anthropic experienced 80x growth in Q1 2026, compared to its own 10x projection. That created a problem of compute constraint that suddenly seemed to be solved by a shocking deal with SpaceX-xAI to lease compute.

These events seem almost incomprehensible compared to everything the tech industry has experienced over the past 30 years. That was the primary motivation for publishing AGNT: The Orchestration Economics Manifesto, a framework for what is becoming the largest value dislocation within the S&P 500 in modern memory.

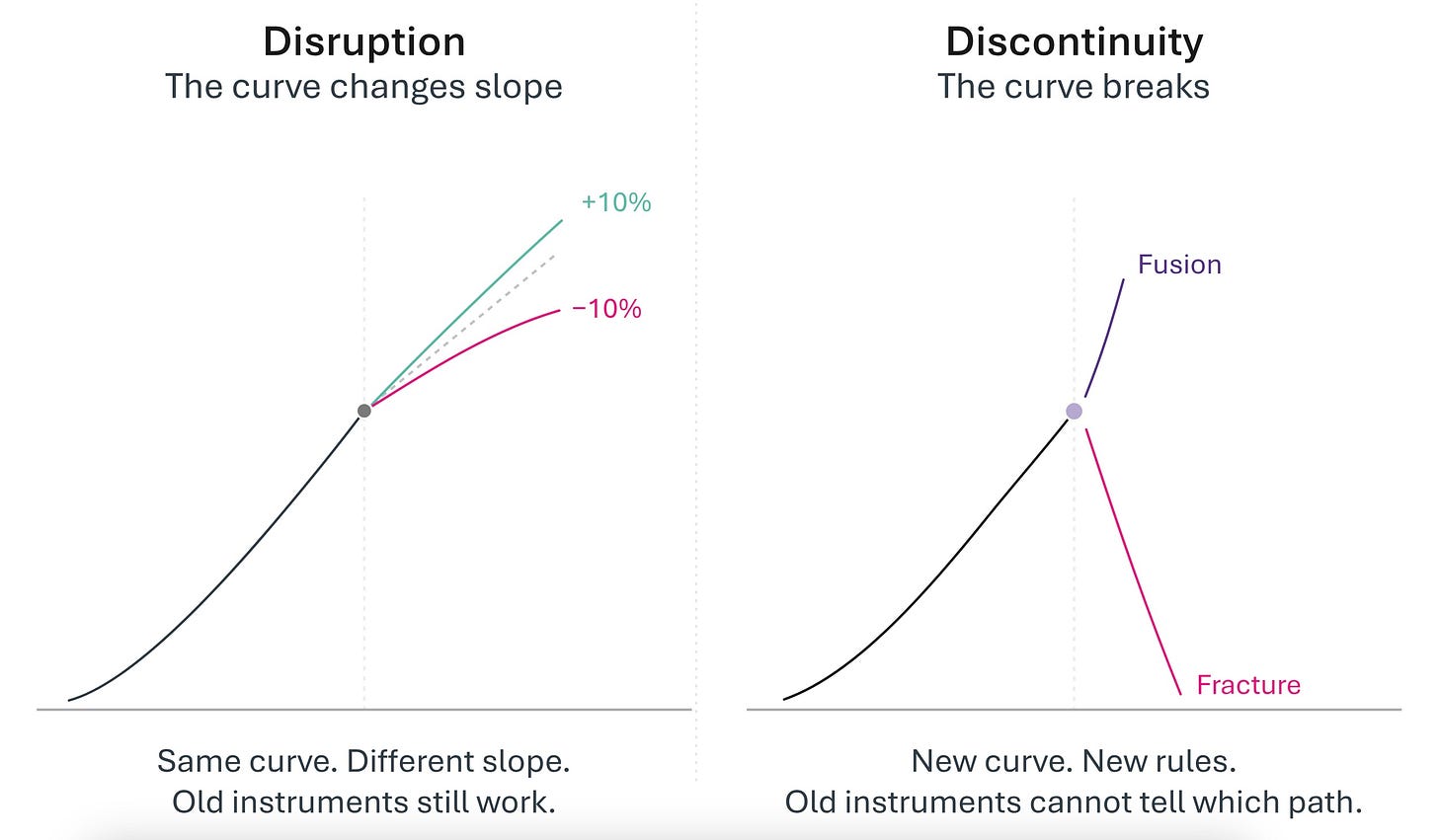

The transition into the Agentic Era is not a faster version of the software cycle we already know how to analyze. It is a discontinuity, a break in the operative logic by which work is organized, coordination is conducted, and economic power becomes durable. Disruption rearranges positions inside an existing order. A discontinuity alters the architecture itself.

This Manifesto is my attempt to make that new architecture legible, and to name where durable value will accrue in the era now opening.

Discontinuity is not Disruption

The era of generative and agentic AI is not disruption. It is discontinuity.

I know it is tempting to dismiss these as buzzwords or shallow consultant shorthand. Disruption. Discontinuity. Paradigm. Several decades of overuse have stretched and flattened such words, robbing them of meaning. But these words once signified very precise concepts that shaped how we understood technological change. That is why, when I began writing three years ago about generative and then agentic AI, I chose the word discontinuity. Not because it sounds dramatic. But because it is technically accurate, and the distinction between disruption and discontinuity is load-bearing for everything that follows. I believe we must reclaim that meaning to help navigate the present and guide us toward the future as it unfolds.

Disruption follows predictable patterns. New entrants target overlooked segments, gradually moving upmarket until incumbents fall. An existing curve steepens or flattens, but the curve continues. Leaders and investors can deploy existing tools to analyze the shift. This was the foundational insight of Clayton Christensen’s The Innovator’s Dilemma. He first introduced the term “disruptive technologies” in 1995, one year after the Netscape browser was released, and his framing for thinking about technological disruption became a cornerstone for the next three decades of innovation and entrepreneurship.

Discontinuity goes beyond disruption. If disruption changes the slope of a curve, discontinuity breaks the curve entirely. All previous strategies, tools, and historical lessons are ripped away. The break can lead to extraordinary value creation or extraordinary destruction.

When cloud computing disrupted on-premises software, it was a radical change in terms of pricing and technology. And yet, it was still CRM or payroll. People using it and installing it had reference points from their experiences. That is disruption.

Generative and agentic AI broke this pattern. Companies are not being handed a new CRM or payroll platform. Someone is giving them a magic wand and saying, “Here, it can do anything. Imagine what you can do with this”. Nothing in their experience has prepared them for the magic-wand scenario. They have no mental models.

So, they turn back to the old ones and stick the magic wand into the old tech stack, wishing for increased ROI that never materializes. When MIT conducted a first-of-a-kind survey last summer, only 5% of enterprises reported any ROI from their initial generative AI projects. As the study’s authors noted, the failure was not “driven by model quality or regulation but seems to be determined by approach”.

This is not surprising. Most investors and executives optimize for disruption because they can deploy familiar analytical frameworks, frameworks that project cash flows, calculate returns, and assess competitive dynamics using tools refined over decades. Discontinuity places them in a landscape where everything they once knew has lost its power to guide them.

French philosopher Gaston Bachelard described “epistemological rupture” as moments when progress requires abandoning old frameworks entirely, because accumulated knowledge has become an obstacle to understanding.

This is the core difficulty. The greatest obstacle to understanding discontinuity is not the absence of new information. It is the presence of old frameworks that interpret new evidence as confirmation of the regime that is ending.

This discomfort produces two failure modes. The first is paralysis: waiting for clarity that will arrive too late. The second is pattern-matching to previous cycles: applying frameworks that are no longer relevant and making catastrophic allocation decisions.

Frustration grows because the discontinuity has not been recognized. Meanwhile, technology's capabilities are rapidly advancing. Those who have recognized the discontinuity are somehow, almost inexplicably to others, transforming their businesses at unimaginable velocity.

This inability to build new mental models is a defining characteristic of discontinuity. Those unable to reorient themselves are at grave risk of being on the wrong side of a historic economic valuation shift.

The Human Challenge and the Scale of Time

This is about tech. But it’s a mistake to think that it’s just about tech. Such moments present a fundamental human challenge: people’s brains are not wired for discontinuity.

Navigating discontinuity requires an abstraction capability, the ability to see how all the pieces might eventually align and what consequences could be unleashed. Generative and agentic AI have the power to turn many non-technology companies into technology companies, an evolution many industries should start recognizing now.

To understand and adapt to discontinuity requires an understanding of the underlying components driving this phenomenon, a clear vision of how that impacts an existing business model, and a sophisticated view of the structures and assets that are realigning or emerging to enable something new.

This has been at the very core of my work for the past three years. And if there is one critical aspect that must be recognized to begin building the right mental model for this discontinuity, it is understanding the scale of time. The generative and agentic AI transformation is far more profound and sweeping than even many sophisticated technology leaders have truly grasped. That means it will play out over a much longer time frame than investors and entrepreneurs are accustomed to tolerating. This shift requires everyone across the innovation economy to fundamentally reset the way they think about building new companies, making investments, and defining the shape of markets. This moment demands a long-term view to map out which investments and technologies will make sense at which moment and why.

Amid the rubble of the dot-com bust, a certain defeatism set in. Maybe all the internet hype had really been just that: hype. But many Silicon Valley VCs began discussing a book written a decade earlier by historian David Nye, “Electrifying America: Social Meanings of a New Technology”. The book traces the many ways in which electricity transformed the nation.

Cities installed electric streetlights, which expanded nightlife and led to a wave of new restaurants opening. New appliances such as refrigerators and washing machines were designed to increase demand for electricity in homes. No one could have envisioned most of these innovations when Thomas Edison made his first lightbulb in 1879. It took decades to put the infrastructure in place, raise investment capital, develop use cases, and refine business models.

The real transformation played out over a much longer timescale as all the pieces were put into place. Such was the case with digital transformation. Though it may have taken longer than initially predicted, today we are living in the digital world many envisioned in 2000, thanks to smartphones, 4G wireless, broadband, and a host of business-model innovations enabled by these waves of infrastructure.

When dealing with discontinuity, the scale of change is so massive that timelines are impossible to predict. So many pieces must align before exponential potential is unleashed.

Then, when it happens, the speed of change is startling.

This pattern repeats. A breakthrough technology appears. Euphoric predictions about sweeping changes are made. They fail to manifest. Doubt appears. Progress seems to stall. And then, in a blink, everything accelerates with little warning. We are in that phase now.

Anyone in the tech industry who experienced 2025 felt every extreme of this cycle. One moment, there was anxiety about an AI bubble. Then there was fear of overspending on infrastructure. Then the pendulum swung, and the market believed a niche product announcement crushed billions of dollars in market value for incumbent SaaS companies. Now, in reverse, we are all panicked that we are running out of compute and are assessing whether compute constraints constitute a single point of failure (SPOF) for the AI labs.

OpenAI CEO Sam Altman captured the difficulty of reconciling these extremes when he said in August 2025: “Are we in a phase where investors as a whole are overexcited about AI? My opinion is yes. Is AI the most important thing to happen in a very long time? My opinion is also yes”.

The bubble skeptics are correct that many companies in this space will fail, just as many internet companies failed in 2000. They are wrong to conclude that the transition itself is speculative.

The Capability Curve Broke First

This discontinuity creates the foundation for something new: abundant intelligence, accessible through APIs, improving exponentially.

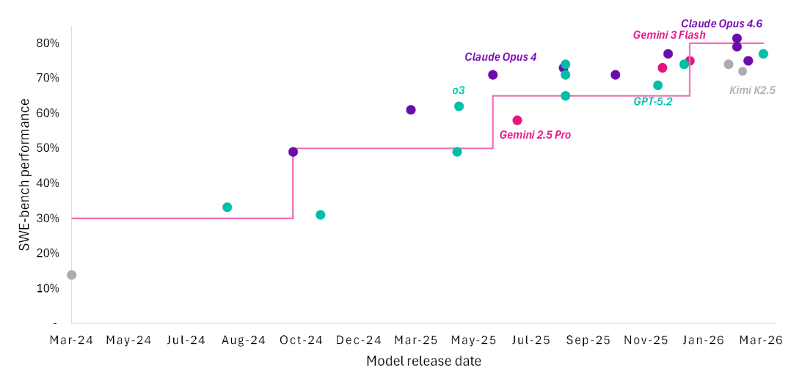

Companies that relied on linear improvement in AI capabilities, expecting gradual progress from models that could barely complete sentences to models that could write paragraphs, now suddenly faced step-function changes that break all projection curves.

This is not about doing existing things 10% better. When ChatGPT was first released in November 2022, it could describe a concept. By 2025, AI could architect a solution, write the code, build the interface, and deploy a functional application autonomously. By March 2026, AI could coordinate teams of sub-agents that divide complex professional work across domains, execute in parallel, sustain reasoning across a million tokens of context, operate computers through their own interfaces, and correct their own errors over hours of unsupervised operation. Three years. Three different worlds.

Importantly, this capability was proven and reproducible across multiple vendors. It was not one model, one company, or one approach. Frontier performance converged: multiple models achieve 80% on SWE-Bench (Software Engineering Benchmark), which evaluates large language models by testing their performance on real-world software engineering tasks. Multiple coding tools are capturing billions in revenue. The leaderboard positions for the latest frontier models change from day to day, week to week. The progression is accelerating in a way fundamentally different from previous AI winters.

The gap between capability announcement and production deployment has been compressed from years to months. GPT-4’s initial capabilities were rolled out to a limited set of users over several months in 2023 with tight restrictions, and then gradually expanded to broader availability over the next year. In sharp contrast, when Google released Gemini 3 Pro in November 2025, it was available globally and already baked into many of its most widely used products. What once required a year of cautious rollout now ships in a morning.

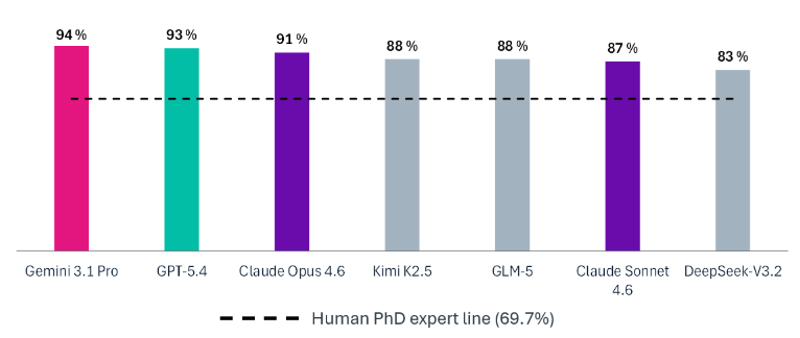

Models are reaching PhD-level reasoning as a foundational capability. Not as a research artifact, but as commercially available infrastructure.

Then the Substrate Commoditized

As AI capabilities advanced rapidly, a group of researchers in late 2023 proposed the graduate-level Google-Proof Q&A (GPQA) benchmark, based on 448 PhD-level questions from multiple disciplines. A more rigorous variation based on a subset of 198 questions emerged, known as GPQA Diamond, where human experts scored 69.7%. The first commercially available model did not surpass that human threshold until December 2024. Eighteen months later, multiple models from competing laboratories exceeded 90%.

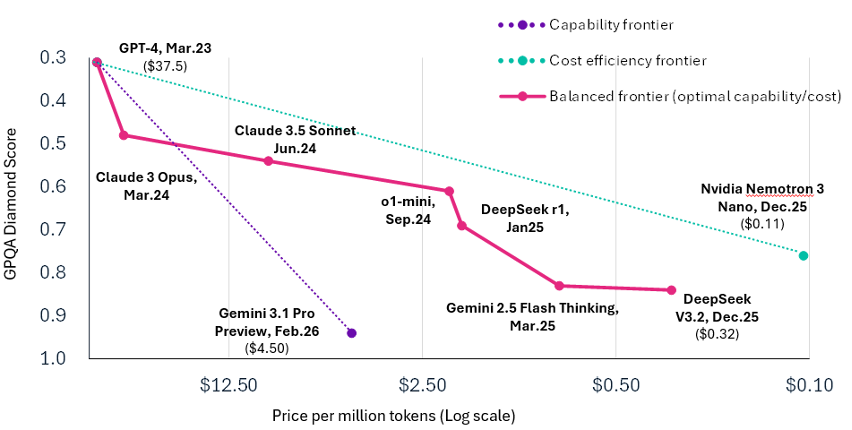

Meanwhile, the cost per query had fallen to fractions of a cent. Peer-reviewed research from Epoch AI found that the price to achieve a given level of frontier performance was declining by a factor of 5-10x per year. Roughly a third of that decline was attributable to algorithmic efficiency alone.
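To make the compounding concrete, here is a minimal sketch of the arithmetic. The starting price of $10 per million tokens is a hypothetical figure chosen only for illustration, not a number from the Epoch AI research, and the function name `price_after` is my own. Only the 5x and 10x annual factors come from the text above.

```python
def price_after(start_price: float, annual_factor: float, years: float) -> float:
    """Price of a fixed capability level after `years`, assuming it becomes
    `annual_factor` times cheaper every year (5 means 5x cheaper per year)."""
    return start_price / (annual_factor ** years)


# Hypothetical starting point: $10.00 per million tokens (illustrative only).
START_PRICE = 10.0

for factor in (5, 10):
    for years in (1, 2, 3):
        price = price_after(START_PRICE, factor, years)
        print(f"{factor}x per year, after {years} year(s): ${price:.4f}")
```

Under these assumptions, three years at 5x per year compounds to a 125x decline, and three years at 10x per year compounds to a 1,000x decline. That is the sense in which the cost curve behaves less like a discount and more like a change in regime.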

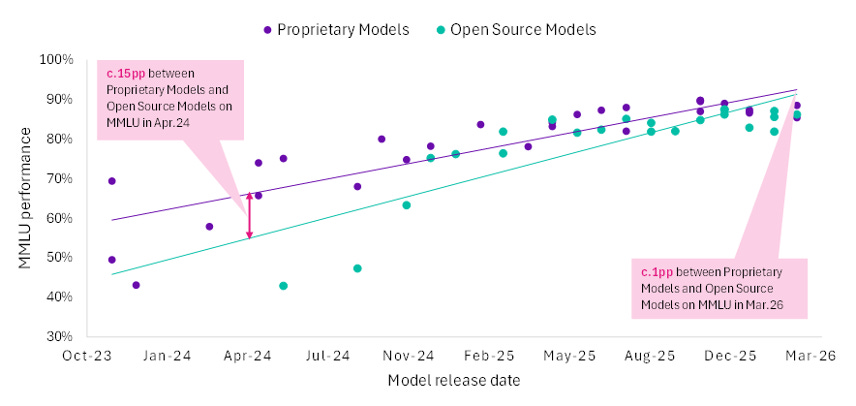

The convergence was as significant as the cost trajectory. On the Massive Multitask Language Understanding (MMLU) benchmark, the standard measure of general knowledge across academic subjects created in 2020, the gap between proprietary frontier models and their open-source alternatives collapsed from approximately 15 percentage points to less than 1 percentage point by late 2025.

Each month brought a new release that narrowed the distance further. DeepSeek, Kimi K2, and Llama achieved near-parity with systems that cost orders of magnitude more to build. Intelligence that was once the exclusive province of three or four laboratories became available to anyone with an API key or a sufficiently capable laptop. When the substrate becomes uniform, differentiation migrates to what is built upon it. This is not a theoretical observation. It is the mechanism that drives everything that follows.

Abundant intelligence is the necessary condition. The discontinuity is what that abundance made possible: machines that act.

Then Machines Became Actors

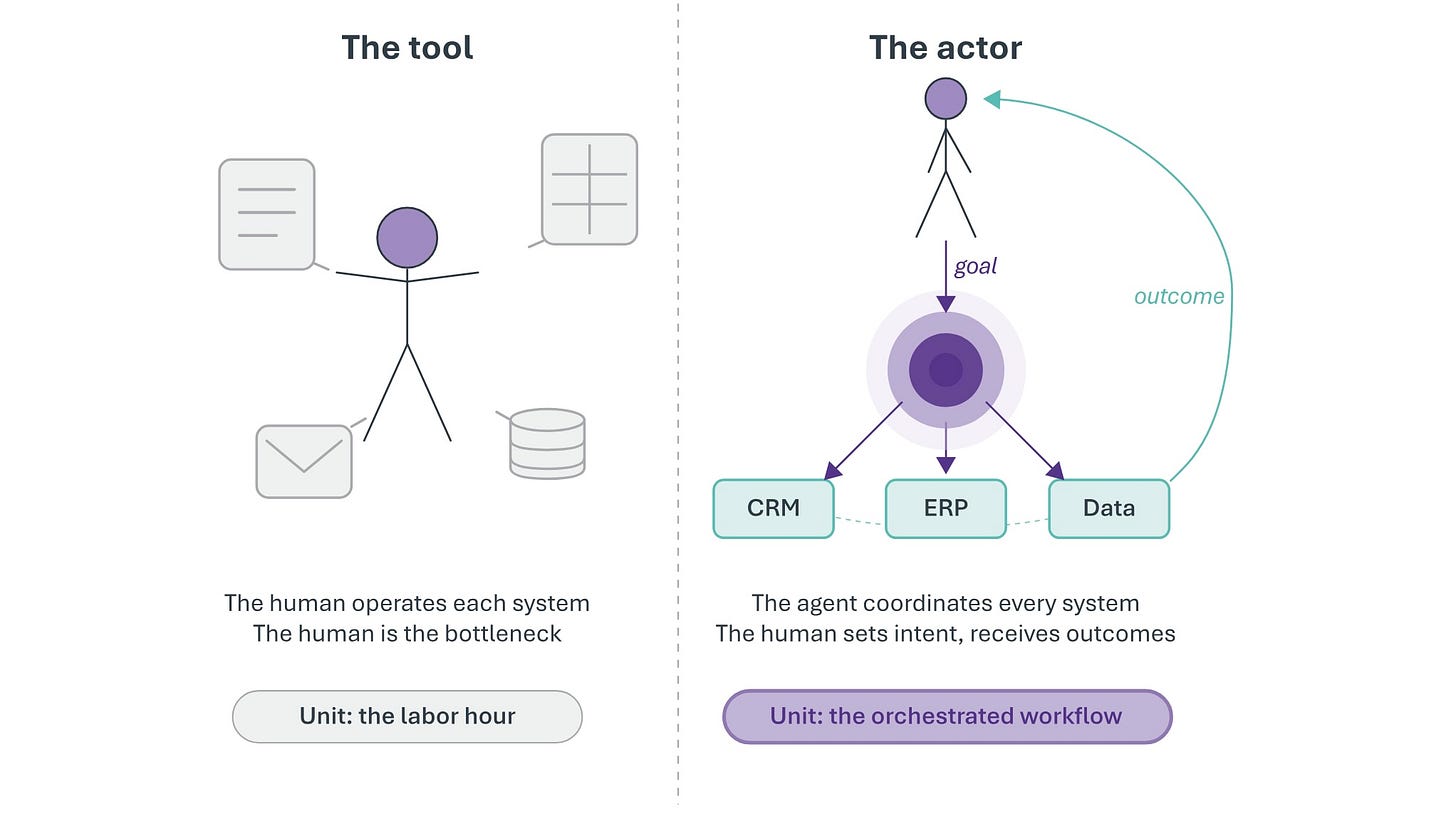

The break occurred when intelligence became sufficient for autonomous action, when machines crossed from processing instructions to pursuing goals. This was a qualitative change in what software is. Every previous generation of software, including every previous generation of AI, operated within the same architectural relationship to human work: the human decided, the tool executed.

The tool could be astonishingly capable. AlphaGo defeated the world Go champion ten years ago. It did not decide to play Go. GPT-4 could draft a legal brief. It did not decide which brief to draft, did not select the relevant precedents from the firm’s case history, did not coordinate with the associate handling discovery, and did not file the brief with the court. The human performed every coordination function. The AI performed one step, brilliantly, and waited for the next instruction.

Between late 2024 and early 2026, that architecture broke.

The evidence is not ambiguous. When Claude Code receives a task such as “resolve this GitHub issue”, it reads the codebase, identifies the relevant files, reasons about the bug, writes a patch, runs the test suite, discovers the patch introduced a regression, rewrites the patch, reruns the tests, and submits a pull request. No human selected the files. No human ran the tests. No human identified the regression. The agent received a goal and autonomously determined how to achieve it.
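The shape of that behavior can be sketched in a few lines. This is not how Claude Code is implemented, which the text above does not describe. It is a simplified illustration of a goal-directed loop, and every name in it (`run_agent`, `SuiteResult`, `toy_propose`, `toy_tests`) is hypothetical. The toy functions stand in for a model call and a real test runner so the sketch can run on its own.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class SuiteResult:
    """Outcome of one (hypothetical) test-suite run."""
    passed: bool
    failures: List[str] = field(default_factory=list)


def run_agent(
    goal: str,
    propose_patch: Callable[[str, List[str]], str],
    run_tests: Callable[[str], SuiteResult],
    max_attempts: int = 5,
) -> Tuple[str, int]:
    """Propose a patch, test it, and revise from failures until tests pass.

    Returns the accepted patch and the number of attempts it took.
    """
    feedback: List[str] = []
    for attempt in range(1, max_attempts + 1):
        patch = propose_patch(goal, feedback)  # the agent chooses the change
        result = run_tests(patch)              # the agent checks its own work
        if result.passed:
            return patch, attempt
        feedback = result.failures             # failures inform the next try
    raise RuntimeError(f"no passing patch after {max_attempts} attempts")


# Toy stand-ins so the sketch runs; a real agent would call a model and a test runner.
def toy_propose(goal: str, feedback: List[str]) -> str:
    return "patch-v2" if feedback else "patch-v1"


def toy_tests(patch: str) -> SuiteResult:
    if patch == "patch-v1":
        return SuiteResult(passed=False, failures=["regression in existing test"])
    return SuiteResult(passed=True)


patch, attempts = run_agent("resolve this GitHub issue", toy_propose, toy_tests)
print(patch, attempts)  # prints: patch-v2 2
```

The point of the sketch is where the decisions sit. The caller supplies only the goal. Choosing the change, checking it, and reacting to the regression all happen inside the loop, with no human instruction between steps.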

That is not a better tool. That is a new kind of actor.

The distinction matters because it determines the correct analytical framework. If machines are better tools, the old frameworks apply with modest adjustment: productivity improves, margins expand, the curve steepens. The analyst’s DCF model works. The board’s strategic plan holds. The investment bank’s sector thesis survives with updated assumptions.

If machines are actors, the frameworks break. The unit of production changes. The cost structure of work changes. The competitive dynamics of every industry that employs knowledge workers change. The analyst needs a new model, not updated assumptions.

That is what the philosopher of science Thomas Kuhn meant by a paradigm shift. For those who still associate “paradigm shift” with Kuhn, the phrase can almost conjure a mental image of gliding from one framework to another. What is often overlooked is an equally important part of Kuhn’s insight into what catalyzed these shifts. Within scientific communities, an existing paradigm would begin to exhibit a series of “anomalies”. These observations were beyond the framework’s ability to explain, plunging it into a period of crisis.

The “paradigm shift” often involved a messy, heated middle period full of disagreement over direction, rules, and ways to explain and interpret the new information and dynamics. Many schools of competing thought would emerge trying to define the rules of the new paradigm, a necessary, if sometimes brutal, process. While Kuhn spoke of “anomalies”, I have chosen a different, precise word to guide us: discontinuity.

To navigate through discontinuity and into the new paradigm, it is important to recognize that the old observations are wrong. They are incommensurable. The old framework cannot produce the new observations. The data that would explain the shift do not fit into any field in the old model.

Consider the shock when software companies lost hundreds of billions in value in early February 2026. Entire sectors were repriced on the assumption that something fundamental had changed. That assumption was right. But the shift was not caused by a single model release or a single company. It emerged from a cascade of changes across the AI stack in cost, capability, infrastructure, coordination, and deployment that arrived within months of one another. Each was significant alone.

Together, they produced the first structural signal of the Agentic Era: intelligence behaving like a commodity. When intelligence commoditizes, value does not disappear. It migrates.

But to where, to whom, and on what terms?

Those questions are the core of this Manifesto. Coding was the first domain to cross, but its lessons generalize to any domain involving structured reasoning, tool use, and verifiable outputs. The crossing order is predictable. The crossing itself is not in question.

The views and opinions expressed in this publication are those of the author alone and are based on publicly available information. The expressed views and opinions do not constitute investment advice, a solicitation, or a recommendation to buy or sell any security or financial instrument. The author may hold positions in the securities of companies mentioned. Certain companies referenced may be current or former clients of, or counterparties to, the author or affiliated entities; such relationships will be disclosed where applicable. Past performance is not indicative of future results. To the fullest extent permitted by applicable law, the author does not accept any liability for any loss or damage arising from reliance on this content. Readers should conduct their own independent due diligence and consult a qualified financial advisor before making any investment decision.