The Cerebras IPO Test: $49B May Be Pricing The Wrong AI Inference Economy

The first pure-play wafer-scale company is about to go public just as the Inference Economy is reorganizing around orchestration.

Decoding Discontinuity today released a report for Institutional clients on Cerebras Systems’ S-1 in advance of its IPO this week. Find more information on our Institutional Client Services here.

The Cerebras Systems IPO is reaching a fever pitch ahead of its expected pricing on May 13, with reports that the company may increase both the offering price and the number of shares, raising $4.8 billion rather than the original $3.5 billion. The IPO is 20x oversubscribed, with a revised target valuation of $48.8B per the most recent filing on May 11.

The frothy investor demand is understandable. The bull case writes itself: a vertically integrated, technically extraordinary infrastructure company hitting the public markets just as inference demand is exploding. Cerebras leads wafer-scale integration, an approach once dismissed as commercially impractical, with an A-list roster of customers (G42, OpenAI workloads, AWS) that reads like an inventory of where premium inference will live.

That case is not wrong. But it is incomplete.

As I argued in AGNT: The Orchestration Economics Manifesto, the marginal cost of cognition is collapsing on a non-linear curve, machines are shifting from tools to actors, and value capture is reorganizing around the orchestration layer rather than the silicon layer. What has not been widely understood by the markets is the second-order consequence: as token costs collapse, consumption is exploding faster than the economics of centralized frontier inference can absorb.

The industry has already begun to accept the implications of this dynamic and is reorganizing accordingly: The AI economy will not scale on frontier inference alone. It will scale on inference efficiency.

This is why the Cerebras IPO has broad implications for AI infrastructure, extending far beyond the company itself. Cerebras is the first pure-play wafer-scale company to face the public markets at the exact moment its central premise, that scaling frontier inference is the binding scarcity of the AI era, is being quietly inverted by the very platforms that depend on it.

The company is not being priced against this quarter’s demand. It is being priced on an assumption about which layer of the stack the next decade will reward.

That assumption is evolving rapidly even as the IPO arrives.

The Economy Underneath the Story

Training happens once. Inference happens billions of times daily. Training is the arms race. Inference is the GDP.

In the Inference Economy described in the Manifesto, workflows consume compute as their primary variable input. The unit of production shifts from the labor hour to the orchestrated workflow. Industry tracking already shows that inference accounts for more than 60% of AI workloads, and is climbing toward two-thirds by year-end.

Inference hardware retains utility for four to five years. Training hardware depreciates in eighteen months. Inference serves millions of enterprises. Training serves a dozen labs.

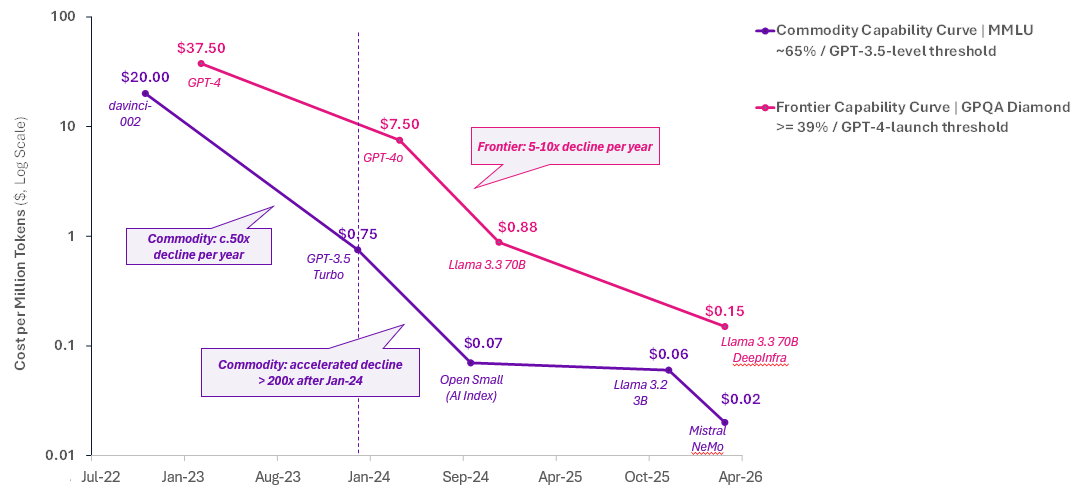

But inference is doing something training never did: Its unit cost is collapsing on a non-linear curve. According to Epoch AI, median inference pricing is falling roughly 50x per year across major benchmarks, with some capability tiers compressing substantially faster.

Even at the frontier layer where Cerebras seeks to differentiate, the economics are changing rapidly. Prices for the highest-performing models on advanced benchmarks like GPQA-Diamond have declined dramatically, while the cost of achieving older capability thresholds continues to compress as smaller and cheaper models inherit performance previously reserved for frontier systems.

As expected, the falling costs initially made use cases more attractive. The problem is that once intelligence is deployed across enterprise workflows, software systems, agentic runtimes, and consumer products, the recurring cost of serving tokens at scale becomes far more significant than the one-time cost of training the model itself. And these total costs are exploding at rates that can quickly overwhelm the falling unit costs.
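The arithmetic behind that claim can be sketched in a few lines. Total spend is unit price times volume, so spend rises whenever consumption grows faster than price falls. The 10x price decline and 40x volume growth below are hypothetical round numbers chosen for illustration; they are not figures from Epoch AI or from any company's disclosures.

```python
# Illustrative only: every number here is an assumption, not reported data.

def total_spend(price_per_mtok: float, mtok_consumed: float) -> float:
    """Total inference spend = unit price x volume (price per million tokens)."""
    return price_per_mtok * mtok_consumed


def spend_multiple(price_decline: float, volume_growth: float) -> float:
    """Change in total spend when unit price falls by `price_decline`x
    while token volume grows by `volume_growth`x over the same period."""
    return volume_growth / price_decline


# Hypothetical scenario: unit price falls 10x, but agentic workflows
# push token consumption up 40x.
before = total_spend(price_per_mtok=10.0, mtok_consumed=1_000)
after = total_spend(price_per_mtok=1.0, mtok_consumed=40_000)

print(after / before)          # 4.0: the bill quadruples despite cheaper tokens
print(spend_multiple(10, 40))  # 4.0: same result from the ratio alone
```

Under these assumptions the unit cost drops by an order of magnitude and the total bill still quadruples, which is the pressure that pushes buyers toward optimizing across the stack.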

At the same time, enterprises recognize that not every task requires frontier capability. Most tasks require acceptable intelligence delivered at the lowest possible latency and marginal cost. So enterprises are increasingly motivated to optimize across the stack. Rather than maximizing frontier capability, the trend is toward minimizing the marginal cost of usable intelligence.

That distinction is reorganizing the stack.

The Frontier Lab That Calls the Frontier Less

In April, Anthropic published the Advisor Strategy on the Claude Platform, one of the most significant architectural documents about the inference economy.

The model is a deliberate inversion of the classical sub-agent pattern. Commodity-tier Sonnet or Haiku operates as the persistent execution substrate. Opus operates as the frontier reasoner and is invoked intermittently as an advisor only when the executor hits a reasoning wall. Sonnet + Opus Advisor improved SWE-bench Multilingual while reducing per-task cost. Haiku + Opus dramatically improved BrowseComp at a fraction of the cost of running Opus continuously.
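The control flow of that pattern can be sketched as follows. This is a minimal illustration, not Anthropic's implementation or API: `executor` and `advisor` are hypothetical stand-in functions, the "stuck" signal is simulated with a `hard:` prefix, and the relative costs (1 versus 15 per call) are assumptions rather than real pricing.

```python
from dataclasses import dataclass
from typing import Optional, Tuple, List

# Hypothetical relative cost per call; not real pricing.
EXECUTOR_COST = 1.0
ADVISOR_COST = 15.0


@dataclass
class RunStats:
    executor_calls: int = 0
    advisor_calls: int = 0

    @property
    def cost(self) -> float:
        return (self.executor_calls * EXECUTOR_COST
                + self.advisor_calls * ADVISOR_COST)


def executor(step: str, advice: Optional[str] = None) -> Tuple[str, bool]:
    """Stand-in for the cheap model. It reports being stuck on
    'hard:' steps unless it has been given advice."""
    stuck = step.startswith("hard:") and advice is None
    return f"done({step})", not stuck


def advisor(step: str) -> str:
    """Stand-in for the frontier model: returns guidance, not the final output."""
    return f"plan for {step}"


def run_task(steps: List[str]) -> RunStats:
    stats = RunStats()
    for step in steps:
        _, ok = executor(step)
        stats.executor_calls += 1
        if not ok:
            # Escalate only when the executor hits a reasoning wall.
            advice = advisor(step)
            stats.advisor_calls += 1
            executor(step, advice)
            stats.executor_calls += 1
    return stats


steps = ["easy: rename variable", "easy: run tests",
         "hard: fix race condition", "easy: commit"]
stats = run_task(steps)
# Baseline assumption: one frontier call per step.
frontier_only_cost = len(steps) * ADVISOR_COST
print(stats.cost, frontier_only_cost)  # 20.0 60.0
```

In this toy run the frontier model is called once out of four steps, so the task costs a third of the frontier-only baseline. The real savings depend entirely on how often the executor actually needs to escalate.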

The world's most sophisticated frontier lab is now designing systems whose explicit goal is to call the frontier less. Anthropic’s optimization target is no longer “use the most capable model available.” It is “deploy the most capable model only when necessary, and route everything else downward.”

Even one of the biggest labs is acknowledging that the frontier is too expensive to consume continuously.

Stanford’s Scaling Intelligence Lab and Together AI quantified what this looks like at scale. Across more than 1 million production-style queries distributed across multiple local models and accelerator types, the share of workloads that could be handled locally on laptops and phones expanded dramatically in just two years. Their Intelligence Per Watt metric improved 5.3x between 2023 and 2025. The share of queries consumer-grade hardware can handle rose from 23% to 71%. Hybrid local-cloud routing materially cuts both energy and inference cost relative to cloud-only execution.

To be clear, frontier inference will not disappear. Long-context agentic decode, sovereign workloads, scientific computing, defense applications, and latency-sensitive reasoning will continue requiring high-performance centralized infrastructure. The question is not the existence of that demand. It is the share of total inference demand that it represents once orchestration matures.

NVIDIA Stopped Fighting the Inference Thesis. It Bought It.

NVIDIA is also following this inference optimization route.

For two years, the strongest argument for specialized inference architectures rested on the observation that NVIDIA’s stack was optimized for training, whereas inference exposed architectural inefficiencies that general-purpose GPU clusters handled poorly. For instance, the decode phase of inference is fundamentally memory-bandwidth-bound rather than compute-bound. KV-cache reshuffling, inter-chip communication overhead, and bandwidth inefficiencies constrained performance as models scaled into larger-context and agentic workloads.
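A back-of-the-envelope roofline calculation shows why decode is bandwidth-bound. At small batch sizes, generating each token requires streaming essentially all model weights (plus the KV cache) from memory, while performing only about two floating-point operations per parameter. The hardware figures below (3,000 GB/s of memory bandwidth, 1,000 TFLOPS peak) and the 8B-parameter model are hypothetical round numbers, not the specifications of any real chip.

```python
def decode_tokens_per_sec(params_b: float, bytes_per_param: float,
                          mem_bw_gbs: float, kv_cache_gb: float = 0.0) -> float:
    """Upper bound on single-stream decode speed when memory-bound:
    each new token streams all weights (and the KV cache) from memory once.
    params_b is in billions of parameters, so params_b * bytes_per_param is GB."""
    gb_read_per_token = params_b * bytes_per_param + kv_cache_gb
    return mem_bw_gbs / gb_read_per_token


def is_memory_bound(flops_per_byte: float, peak_tflops: float,
                    mem_bw_gbs: float) -> bool:
    """A workload is memory-bound when its arithmetic intensity sits
    below the hardware ridge point (peak FLOPs / memory bandwidth)."""
    ridge = (peak_tflops * 1e12) / (mem_bw_gbs * 1e9)
    return flops_per_byte < ridge


# Hypothetical accelerator and model (assumed values for illustration).
BW_GBS, PEAK_TFLOPS = 3000, 1000
print(decode_tokens_per_sec(8, 2, BW_GBS))                 # 187.5
print(decode_tokens_per_sec(8, 2, BW_GBS, kv_cache_gb=4))  # 150.0

# Batch-1 decode in 16-bit: ~2 FLOPs per parameter / 2 bytes per parameter.
print(is_memory_bound(1.0, PEAK_TFLOPS, BW_GBS))           # True
```

With roughly 1 FLOP per byte against a ridge point above 300, the compute units in this sketch sit mostly idle during decode. Adding bandwidth, not FLOPs, is what raises the ceiling, and a growing KV cache lowers it further.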

That was the foundational thesis behind Cerebras, Groq, SambaNova, and Tenstorrent. The moat was not raw compute performance. It was superior economics in the exact workload regime where agentic inference demand was expected to concentrate.

NVIDIA agreed.

In December, NVIDIA paid roughly $20 billion for Groq’s inference technology, leadership team, and architecture, a non-exclusive license wrapped in an acqui-hire. The disaggregated decode architecture, once sold as an alternative to NVIDIA’s foundational CUDA platform, is now integrated into CUDA through the Agent Toolkit and Dynamo orchestration layer. GPUs handle prefill. LPUs specialize in decode. Both live inside the same runtime, controlled by the same vendor. Customers no longer need to leave the dominant programming environment to access the decode-optimization patterns that specialized vendors previously argued required alternative architectures altogether.

In March at GTC 2026, NVIDIA unveiled Vera Rubin NVL72, which boasted 5x inference performance, 3.5x training performance, 10x lower cost per token, and roughly 10x inference throughput per watt compared to Blackwell. Six new chips, one supercomputer, shipping H2 2026. NVIDIA is no longer competing on training and ceding inference to specialists. It is competing directly for the inference-optimization layer itself.

Then there is Cursor. Last month, the AI coding company struck a deal with Elon Musk’s SpaceXAI for access to the latter’s Colossus training supercomputer in exchange for either a $10 billion payment or an option to acquire the company for $60 billion.

Earlier this year, Cursor published warp-decode, a kernel-level optimization that extracts materially higher bandwidth efficiency directly from NVIDIA hardware. One of the largest real-world consumers of agentic inference in the world hit the exact decode bottleneck that specialized architectures were supposed to solve. Cursor did not migrate to wafer-scale. It did not abandon CUDA. It optimized inside it.

That decision matters more than any benchmark. Customers with the strongest economic incentive to evaluate alternatives are revealing their preferences through their build choices. They are staying within CUDA and squeezing more performance out of the hardware than its vendor has extracted. The software ecosystem is closing the architectural gap that specialized silicon was supposed to monopolize.

Compression From Both Sides

This is the architectural picture facing Cerebras as it goes public.

From above, the orchestration layer is structurally minimizing the share of workloads routed to frontier inference overall. From below, the incumbent platform is absorbing the optimization stack that previously justified architectural divergence in the first place.

Specialized infrastructure does not disappear in this picture. It compresses. The environments where premium economics remain are durable but narrow. Cerebras is technically extraordinary in this respect because wafer-scale integration remains one of the most ambitious architectural achievements in modern computing, addressing engineering constraints that the industry considered commercially impractical only a few years ago.

But the future economic structure of the inference market will not ultimately be determined by technical superiority in isolated workloads. It will be determined by the proportion of total inference demand that remains dependent on frontier-tier economics once orchestration matures and inference consumption becomes more economically selective.

Against this backdrop, Cerebras’s recent strategic evolution suggests the company understands its shifting position. Over the twelve months preceding its IPO, the company pivoted aggressively toward operating its own inference cloud while consolidating its commercial future around a relatively concentrated set of counterparties: the UAE state-backed AI ecosystem through G42 and MBZUAI, strategic alignment with OpenAI workloads, and integration into the broader AWS ecosystem.

That concentration could prove to be a double-edged sword, either securing premium workloads or making the company overly dependent on a few customers.

For now, however, the primary problem is that the current debate around AI infrastructure remains overly focused on aggregate demand growth, even though aggregate demand growth is no longer the relevant question. AI infrastructure spend will almost certainly continue to rise.

The question to watch in the wake of the Cerebras IPO: which layers of the stack retain pricing power once intelligence allocation and efficiency become more economically important than intelligence generation alone?

The views and opinions expressed on this website are those of the author alone and are based on publicly available information. The expressed views and opinions do not constitute investment advice, a solicitation, or a recommendation to buy or sell any security or financial instrument.

The author may hold positions in the securities of companies mentioned. Certain companies referenced may be current or former clients of, or counterparties to, the author or affiliated entities; such relationships will be disclosed where applicable.

Past performance is not indicative of future results. To the fullest extent permitted by applicable law, the author does not accept any liability for any loss or damage arising from reliance on this content. Readers should conduct their own independent due diligence and consult a qualified financial advisor before making any investment decision.