The Hyperscaler Reckoning: $700 Billion for a Utility - While the Moats Disappear?

Recent earnings confirmed history's largest AI infrastructure cycle. The real story is a layer-by-layer mutation of tech economics and the sorting of four companies the consensus still treats as one.

TL;DR: The infrastructure cycle is still in the acceleration phase. But for most hyperscalers, Capex is buying a utility that risks commoditization, while the agentic transition is simultaneously destroying the moats of the high-margin businesses those same companies are trying to protect. Meta, Amazon, Google, and Microsoft represent c.20% of the S&P 500.

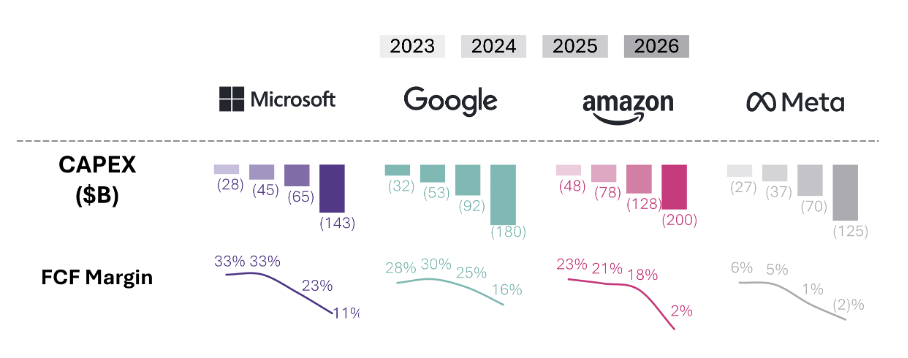

The four largest hyperscalers reported quarterly earnings within hours of each other last week. Each beat consensus and raised 2026 capital spending guidance; together they committed between $650 billion and $700 billion to AI infrastructure for the year. That’s nearly double what these companies spent in 2025 and arguably the largest concentrated infrastructure cycle in the technology sector's history. Morgan Stanley extended the count to five (adding Oracle) and raised its projection for this year to $805 billion, from $765 billion, an amount one analyst noted would be equivalent to all spending by non-tech S&P 500 companies in 2025.

This spending has now persisted long enough to confirm an unprecedented cash-flow crunch. Amazon’s trailing twelve-month free cash flow collapsed from $26 billion to $1.2 billion, a 95% decline. CEO Andy Jassy used his annual shareholders’ letter to defend the trajectory in cash-cycle terms. AWS, he wrote, “has to lay out cash for land, power, buildings, chips, servers, and networking gear in advance of when we can monetize it,” with Capex growth meaningfully outpacing revenue growth in the high-growth phase, while the underlying assets have useful lives of five to thirty-plus years.
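The size of that decline is easy to verify from the two figures quoted above. A minimal sketch, in which the helper name `pct_change` is illustrative and the inputs are simply the numbers in the text:

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new (negative means a decline)."""
    return (new - old) / old * 100.0


# Trailing twelve-month free cash flow quoted in the text, in billions of dollars.
amazon_fcf_change = pct_change(26.0, 1.2)
print(f"Amazon TTM free cash flow change: {amazon_fcf_change:.1f}%")
```

The result rounds to the 95% decline cited.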

The bull case for Capex spending ultimately rests on the argument that returns arrive later, after monetization outpaces the buildout. As Jassy reminded investors in that same letter, AWS investment was being questioned internally as recently as the 2014 operating plan review, when a senior leader opened the discussion by asking, “Tell me again why we’re doing this business?”

The problem is that it’s hard to see when that “after” arrives. In my view, these Capex numbers are set to keep growing as the Inference Economy accelerates. The question of “will the spend pay off?” will be a perennial one.

In this context, the market needs to scrutinize the multiples applied to companies whose valuations represent ~20% of the S&P 500 to determine whether they are consistent with the new reality of hyperscalers in the Agentic Era. That starts with stepping back and recognizing the broader circumstances facing these companies.

The Agentic Era is recomposing the tech stack and challenging the value pockets of the hyperscalers. As a result, they are getting squeezed from above and below.

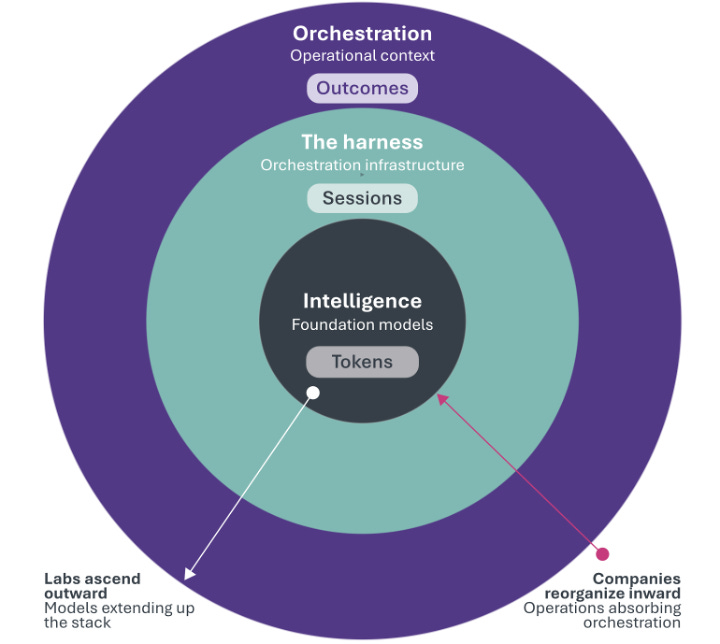

The AI labs are cannibalizing the value that accrues at the infrastructure layer by adding a new layer, the Harness, atop the intelligence itself. All the money being poured into the infrastructure layer risks producing only commodity returns.

Meanwhile, the intelligence they produce threatens the core of the hyperscalers' application value. The agentic paradigm is dismantling the application-layer advantages that have justified their historic valuations.

The architectural and financial profile that earned hyperscalers their historic multiples is mutating. This implies a structural mispricing of a large portion of the market.

However, it would be a mistake to assume that all hyperscalers are doomed or can even be evaluated in the same way. That would assume they are spending on the same thing, building the same kind of moat, and facing the same competitive landscape. They are not. The agentic transition is acting on each layer of the technology stack in opposite directions, simultaneously, sorting these companies into different valuation regimes that the consensus has not absorbed.

The financial mutation

For two decades, the defining characteristic of the largest technology companies was capital efficiency, with asset-light platforms converting modest infrastructure investment into extraordinary returns. The cloud was a virtuous cousin of the software business. It was more capital-intensive than pure SaaS but operated at gross margins of 70% and produced the cash flows that made these the most enviable corporate financial profiles in modern markets. This is the financial profile that earned hyperscalers their multiples.

That profile is now in transition. Bloomberg estimated that the four largest hyperscalers would spend ~$650 billion in 2026. Goldman Sachs projected cumulative hyperscaler Capex of $1.4 trillion from 2025 to 2027. That is close to three times the $477 billion spent from 2022 to 2024. Leading hyperscalers have emphasized that they are primarily constrained by power and capacity, not by demand, and have repeatedly flagged supply bottlenecks on recent earnings calls.

Operating cash flow is no longer sufficient to fund the buildout. Combined hyperscaler debt issuance was $121 billion across the Big Five in 2025, compared to an annual average of $28 billion between 2020 and 2024. Morgan Stanley estimated that hyperscalers will borrow ~$400 billion in 2026, more than double the $165 billion borrowed in 2025. JPMorgan projects $1.5 trillion in AI-related bond issuance over the next five years. In February 2026, Alphabet issued a 100-year bond, the first century bond from a technology company since Motorola in 1997. The $32 billion multi-currency raise was nearly tenfold oversubscribed. Pension funds and life insurers, institutions that match liabilities over generations, bought Google paper at 6.05 percent as if Alphabet were sovereign-grade infrastructure rather than the parent of a search engine.
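As a rough check on the scale of the shift, the ratios implied by the Capex and borrowing figures in the two paragraphs above can be computed directly. This is a minimal sketch: the variable names are illustrative, and the inputs are the third-party estimates quoted in the text, not independent data.

```python
# Figures quoted in the text, in billions of dollars.
capex_2025_2027 = 1400.0    # Goldman Sachs cumulative Capex projection
capex_2022_2024 = 477.0     # cumulative Capex over the prior three years
debt_2025 = 121.0           # combined Big Five debt issuance in 2025
debt_avg_2020_2024 = 28.0   # annual average issuance, 2020 to 2024
borrow_2026 = 400.0         # Morgan Stanley borrowing estimate for 2026
borrow_2025 = 165.0         # Morgan Stanley borrowing figure for 2025

capex_multiple = capex_2025_2027 / capex_2022_2024
debt_multiple = debt_2025 / debt_avg_2020_2024
borrow_multiple = borrow_2026 / borrow_2025

print(f"Capex, 2025-27 vs 2022-24:        {capex_multiple:.1f}x")
print(f"2025 issuance vs 2020-24 average: {debt_multiple:.1f}x")
print(f"2026 borrowing vs 2025:           {borrow_multiple:.1f}x")
```

Note that the $121 billion and $165 billion figures for 2025 come from different sources and are not directly comparable.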

The hyperscalers have, so far, avoided negative free cash flow. That technicality is increasingly trivial. Pivotal Research projects Alphabet’s 2026 free cash flow will fall roughly 90 percent, from $73 billion to perhaps $8 billion. Barclays projects Meta’s free cash flow will drop 90 percent in 2026, then turn negative in 2027 and 2028. The accounting reveals the strain. Amazon shortened the useful life of a subset of its servers from six to five years in January 2025, while Microsoft extended its own server-life assumption in fiscal 2023, adding $3.7 billion to operating income from the change alone. The industry cannot agree on whether its core infrastructure lasts one year or six.

What was once the most capital-efficient sector in modern history has become one of the most capital-intensive. That makes the moat question acute: who actually benefits from this spend?

Decomposing the value stack

Each of these firms is, in functional terms, a stack of four layers operating under a single corporate roof.

Layer 0 is silicon.

Google’s TPUs are the most advanced (now in their 8th generation), Amazon’s Trainium and Inferentia are gaining traction for inference workloads, while Meta’s MTIA, in partnership with Broadcom, and Microsoft’s Maia remain solid but less differentiated to date. Amazon’s Jassy insisted on the positive contribution of the company’s booming chip business in his latest investor letter: “Our chips business is on fire, changes the economics for AWS, and will be much larger than most think (…) Having our own hotly demanded AI chip opens up many possibilities, but perhaps none larger than the ability to lower costs for customers and secure better economics for AWS. At scale, we expect Trainium will save us tens of billions of Capex dollars per year, and provide several hundred basis points of operating margin advantage versus relying on others’ chips for inference.

Our annual revenue run rate for our chips business (inclusive of Graviton, Trainium, and Nitro-our EC2 NIC) is now over $20 billion, and growing triple digit percentages YoY. To dimensionalize this versus other chips companies, that run rate is somewhat understated by our currently only monetizing our chips through EC2. If our chips business was a stand-alone business, and sold chips produced this year to AWS and other third parties (as other leading chips companies do), our annual run rate would be ~$50 billion. There’s so much demand for our chips that it’s quite possible we’ll sell racks of them to third parties in the future.”

Layer 1 is intelligence.

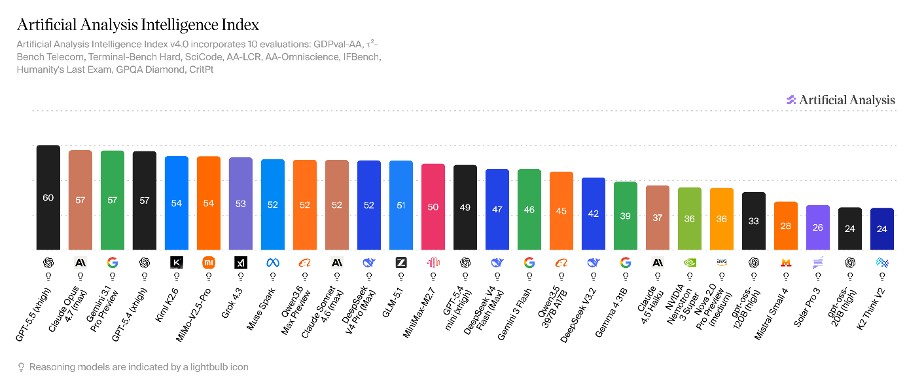

The foundation models. Here, Google is the only hyperscaler producing frontier intelligence, matching the AI labs and the Chinese open-source competitors. Gemini 3.1 Pro Preview is in the top 3 of the Artificial Analysis Intelligence Index, while Meta’s most recent model, Muse Spark, ranks in the top 5, and Amazon’s Nova ranks only 22nd.

Layer 2 is the technical orchestration substrate.

This is what the industry now calls the Harness. The infrastructure that decomposes goals into tasks, delegates them to specialist agents, manages state, and accumulates learning across sessions. Functionally, it is the AI compute layer plus the orchestration runtime that sits immediately above it. The hyperscalers historically assumed they would own this layer, as they owned the SaaS-era developer toolchain. They are discovering that the Harness is parting ways from the compute beneath it and that the margin is traveling with the Harness, not the compute.

Layer 3 is the “application.”

These are the user-facing surfaces where intent originates and value is captured. Search, Office, advertising, retail, consumer hardware, enterprise SaaS.

The agentic transition is acting on these layers in different directions. Layer 0 is becoming structurally important for cost and latency reasons. Layer 1 is consolidating into a small number of frontier providers and commoditizing rapidly underneath them. Layer 2 is absorbing essentially all of the announced Capex. For three of the four hyperscalers, it is becoming a utility. Layer 3 is splitting between surfaces that survive the transition and surfaces that do not.

The two sections that follow examine Layers 2 and 3 in turn.

Layer 2 becomes a utility

The hyperscaler thesis was that the cloud business would absorb AI workloads the way it absorbed every prior generation of software workloads. Rent the compute, sell the developer toolchain that sits on top, capture both layers as a single economic surface. In the SaaS era, this was correct. The cloud and the orchestration software were sold together. The high-margin orchestration tooling was bundled with the lower-margin compute. The hyperscaler captured the full vertical and earned a software multiple on the result.

Layer 2 is the orchestration substrate where the bulk of the announced Capex is landing. It is now being pulled apart from both sides. The model labs are taking the runtime from above. NVIDIA is taking the layer just above the silicon from below.

The labs first: As I argued in Anthropic’s Digital Labor Tax, Claude Managed Agents, launched in early April 2026, is an architectural pivot away from token-based pricing entirely. Anthropic now bundles compute, reasoning, and execution into what I call the Agent Unit: a synthetic colleague billed by the hour. The product runs on existing cloud infrastructure, but the developer never touches it. Anthropic abstracts the substrate into a primitive, charges by the session-hour, and captures the margin between raw compute cost and orchestrated outcome. By owning both the model and the runtime, Anthropic positions itself as the tax collector of digital labor, regardless of whose cloud the labor runs on. OpenAI is building the same architecture under Frontier and Codex.

This is the only path through which AI labs build a durable moat. Intelligence alone is commoditizing. Gemini, Claude, GPT, and the open-source frontier are converging on benchmarks at near-parity. The lab that owns the runtime through which intelligence becomes operational captures the coordination surface, the customer relationship, and the pricing power.

And the labs are not stopping at the runtime. Cowork, Frontier, and the vertical plugins (Claude for Finance, Claude Design) are the labs’ move further up the stack into Layer 3, where intent is captured, and outcomes are delivered. The Harness is a beachhead, not a destination. And this remains true as frontier labs push prices higher.

NVIDIA second: As I argued in NVIDIA’s CUDA Playbook Has a Second Act, the March 2026 Agent Toolkit, which included Nemotron, OpenShell, and AI-Q Blueprint, is the second-act CUDA play, providing a portable, open-source runtime layer immediately above the silicon. The toolkit is model-agnostic and compatible with MCP without depending on it, preventing Anthropic from establishing protocol-level lock-in. NVIDIA is making the bet that if it controls the runtime and the chips, it does not need to own the model or the application.

As a result, the AI compute that the hyperscalers sell, funded by this unprecedented Capex allocation, could start to resemble a utility, while the AI labs and NVIDIA capture the actual “AI” margin through the more valuable orchestration layer.

The bull case for the April 29 Capex hike rests heavily on Q1 commentary that AI margins are better than legacy cloud margins. The financial statements support this for now. Microsoft’s commercial cloud gross margin decreased 100 basis points sequentially, AWS operating margin printed at 38%, and Google Cloud at 33%.

This is short-lived. The strong AI margins notably reflect HBM scarcity passed through as price. These are scarcity rents on a window that closes mechanically as new HBM capacity arrives in 2027 and 2028. Strip out the scarcity rent, and AI compute starts to resemble regular compute. The equation does not hold.

There is one structural exception: a hyperscaler that owns its own Layer 1. In that case, the lab and the cloud are the same entity, the Harness is captured internally, and the margin migration that pressures the others does not occur. This is precisely Google’s position. Gemini, TPU, and Vertex Agent Builder all sit inside Alphabet, so the same capital allocation that is being structurally weakened at the other three is structurally reinforced at Google.

Microsoft, Amazon, and Meta are the three hyperscalers without their own Layer 1. Their strategic path forward is to demonstrate value at the Harness level rather than the compute level. That means building orchestration products credible enough to capture the session, the outcome, and the agent-hour, rather than just renting the GPU underneath. Microsoft’s Copilot Studio and AWS’s Bedrock AgentCore are first attempts. Neither has yet displaced the labs in workflows where the lab also offers a Harness.

The arithmetic is unforgiving. Compute infrastructure earning commodity returns supports utility multiples, perhaps 12 to 15 times earnings. The hyperscalers without Layer 1 are currently priced as software companies in the 20-to-30-times range. That premium rests substantially on what we term Layer 3, the application franchise. Which is precisely the layer at risk in the Agentic Era.
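One way to make this arithmetic concrete is a sum-of-the-parts sketch: if a share of earnings is revalued at a utility multiple while the rest keeps a software multiple, the blended multiple is the earnings-weighted average of the two. The 50% earnings split below is a purely hypothetical input, not an estimate for any company; the multiples are the midpoints of the ranges given above.

```python
def blended_multiple(utility_share: float,
                     utility_multiple: float,
                     software_multiple: float) -> float:
    """Earnings-weighted P/E when part of earnings is valued as a utility."""
    if not 0.0 <= utility_share <= 1.0:
        raise ValueError("utility_share must be between 0 and 1")
    return (utility_share * utility_multiple
            + (1.0 - utility_share) * software_multiple)


UTILITY_MULTIPLE = 13.5   # midpoint of the 12-15x range in the text
SOFTWARE_MULTIPLE = 25.0  # midpoint of the 20-30x range in the text
UTILITY_SHARE = 0.5       # hypothetical share of earnings from commodity compute

blended = blended_multiple(UTILITY_SHARE, UTILITY_MULTIPLE, SOFTWARE_MULTIPLE)
rerating = blended / SOFTWARE_MULTIPLE - 1.0
print(f"Blended multiple: {blended:.2f}x")
print(f"Re-rating versus a pure software multiple: {rerating:.0%}")
```

Varying `UTILITY_SHARE` shows how sensitive the blended multiple is to the mix: the more earnings come from commodity compute, the closer the company trades to a utility.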

Layer 3: when the operator becomes an agent

The application layer was supposed to be the safe ground. Even if Layer 2 was fragmented, Layer 3 was where the historical moats lived: the Office franchise, the Search index, the ad-targeting machine, the retail relationship. The consensus defense of hyperscaler multiples rests substantially on this assumption.

It is correct for some Layer 3 surfaces and demonstrably wrong for others. The dividing line is precise: the moats that survive are those whose value lies beneath the interface. The moats that break are the ones whose value lives in the interface.

The agentic transition changes who operates the system. For the entirety of the prior era, every interface, every workflow, every UX investment was architected for a human operator. The user knew the keyboard shortcuts, navigated the menu, and understood the conventions. The user was trained on Word in school and could not switch to a different word processor without paying retraining costs. The historical Layer 3 moats were anchored in the human operator’s tacit knowledge of the interface, which earned a software multiple precisely because of that lock-in.

When the operator becomes an agent, the moat depends on what sits behind it.

Agents do not need to be trained, do not learn keyboard shortcuts, and are not certified. They operate on any available interface by screenshotting, parsing the DOM, or calling the underlying functionality programmatically. The infrastructure that makes this concrete is now in production: OpenAI’s Operator, Anthropic’s Computer Use, and Google’s Project Mariner (now integrated into other Google products rather than offered standalone) are general-purpose agents that operate web interfaces directly. Every SaaS application accessible through a browser is now automatable by any party, whether or not the vendor consents. The browser is now a universal, consent-free automation surface for general-purpose AI agents.

Where the moat is the interface itself, that is the end of it. Where the moat is a physical or operational substrate that the interface only renders, agents expand the volume flowing through it.

Microsoft is down 14% year-to-date. It now trades at 24.65x trailing earnings. The consensus sees a cyclical correction and a buying opportunity.

The consensus may be right about the cyclical part and profoundly wrong about the structural part. To understand why, you have to grasp what is actually dying. It is not Microsoft’s software. It is the user interface as a workspace.

Consider how a financial model gets built today. The old way: you open Excel, you build the model, you format the output. Microsoft captures your intent because your hands are on its keyboard. The entire value chain, from input to processing to output, lives inside one company’s interface.

Now consider the emerging alternative. You give an orchestrator a high-level objective. It spawns a swarm of specialized agents. One fetches the data from the SQL database. One runs the analysis in Python. One formats the deck. These agents interact with Microsoft’s APIs, not its interfaces. Excel may still exist in this workflow, but as a rendering layer rather than a work surface. The user interface becomes a status dashboard for work happening elsewhere.

This distinction between a source system, where work begins, and a target system, where finished work is deposited, is the crux of the structural challenge.

Microsoft’s moat was never primarily about the quality of Word or Excel. It was that Microsoft was the source system for enterprise knowledge work. Every workflow started there. What Claude Code, Cowork, and NVIDIA’s OpenShell runtime represent is the migration of source-system authority to the orchestration layer.

GitHub still stores the code, but it has become a target system, the place where the agent deposits the pull request, not the place where the developer forms the intent. Office still formats the presentation, but it is a target system, the place where the agent saves the .pptx, not the place where the analysis happened. The hundred billion dollars Microsoft has invested over decades in user experience becomes a legacy cost rather than a competitive advantage once work happens in the runtime layer, not the interface layer.

It is an architectural shift in where intent is captured. Claude Code reached $2.5 billion in annual recurring revenue within nine months. Cursor crossed $2 billion in three months. These are enterprise products that are redirecting source-system authority away from the incumbents and toward the model providers.

The economic consequences compound under the per-seat pricing model. Agent swarms are designed for autonomous, parallel execution. NVIDIA’s AI-Q hybrid architecture demonstrates that these swarms can cut execution costs by 50% while doing the work of multiple human roles. If a swarm orchestrated by a third party replaces a department of fifty people, Microsoft loses fifty subscription seats. The per-seat model becomes inversely correlated with the technology’s success: more productive AI means fewer humans, which means fewer licenses.

That is a structural headwind that compounds over the next few years, and changes the source of the company’s moat.
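The per-seat mechanics can be sketched in a few lines. The seat price below is a hypothetical placeholder, not a quoted Microsoft price; the fifty-seat department is the example used above.

```python
def annual_seat_revenue(seats: int, price_per_seat_month: float) -> float:
    """Annual subscription revenue for a given number of licensed seats."""
    return seats * price_per_seat_month * 12


HYPOTHETICAL_PRICE = 30.0  # dollars per seat per month; assumed for illustration

seats_before = 50  # the department in the example above
seats_after = 0    # the swarm replaces every licensed human operator

revenue_lost = (annual_seat_revenue(seats_before, HYPOTHETICAL_PRICE)
                - annual_seat_revenue(seats_after, HYPOTHETICAL_PRICE))
print(f"Annual per-seat revenue lost: ${revenue_lost:,.0f}")
```

Under per-seat pricing, the loss scales linearly with the number of humans displaced, regardless of how much work the agents route through the vendor's APIs.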

The counterweight is distribution

Microsoft’s enterprise distribution surface includes Azure Active Directory as the identity layer, Teams as the communications fabric, Windows on the endpoint, the global enterprise sales force, and the existing CIO relationships. This remains unparalleled and is harder to disintermediate than the Office work surface. Whatever wins at Layer 2 still has to be sold, deployed, and governed inside enterprises that already run on Microsoft’s identity and security plumbing. Copilot is an interface play and will be contested. Azure-as-the-distribution-substrate-for-other-people’s-agents is a different proposition, and one that an Anthropic locked into a $30 billion Azure contract through 2029 is materially funding. The question for Microsoft is whether the distribution surface compounds fast enough to offset the work-surface erosion. And whether the business model changes that result from it prove to be convincing.

Take Google now, for example.

Google splits internally. The algorithmic infrastructure beneath search includes the index, the crawl, and the rank. It is agent-hardened because the agent has to query the index to answer the user’s question. The advertising business model layered on top is structurally exposed.

Pew Research found that Google’s AI Overviews had already reduced link click-through rates from 15% to 8%. Zero-click searches now account for 56% to 69% of all queries since AI Overviews launched. When an agent reads the AI Overview and synthesizes an answer, the sponsored link below it is never seen. The advertising auction prices the click. The click is disappearing.
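To put the click-through figures in auction terms, the drop can be expressed as clicks lost per thousand searches. A minimal sketch using only the two rates cited above; the function name is illustrative.

```python
def clicks_per_thousand(click_through_rate: float) -> float:
    """Expected link clicks per 1,000 searches at a given click-through rate."""
    return click_through_rate * 1000


ctr_before = 0.15  # link click-through rate without an AI Overview, per the text
ctr_after = 0.08   # link click-through rate with an AI Overview, per the text

clicks_lost = clicks_per_thousand(ctr_before) - clicks_per_thousand(ctr_after)
relative_change = (ctr_after - ctr_before) / ctr_before

print(f"Clicks lost per 1,000 searches: {clicks_lost:.0f}")
print(f"Relative change in click-through rate: {relative_change:.1%}")
```

A seven-point absolute drop is close to a halving in relative terms, which is the number that matters for a business that prices the click.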

For now, AI features are actually helping drive more queries and longer sessions (AI Mode queries are ~3× longer). Google has also started placing ads above, below, and sometimes within AI Overviews and testing them in AI Mode. So the ad business isn’t collapsing. It’s adapting and still printing money. The structural risk, however, remains unchanged.

The bifurcation produces an unusual investment characteristic: Google is the only hyperscaler with both the structurally strongest Layer 1 position (Gemini at the frontier, TPU integrated) and a structurally exposed Layer 3 revenue line (search ads). The capital allocation for the first is being underwritten by the second's cash flow. The transition risk is whether Gemini-based monetization (Vertex, agentic commerce, enterprise) ramps fast enough to offset the slow erosion of CPCs in core search. The bull case is that it does, and that Google ends up with a Layer 1+2 franchise that no other hyperscaler can match. The bear case is that the erosion runs faster than the offset.

The right question

The hyperscalers built the infrastructure used to train the models. They provided the cloud capacity, the data pipelines, and the GPU clusters. They also invested billions in the AI companies that now threaten their application-layer businesses. Amazon and Google are among Anthropic’s largest backers. Microsoft funded OpenAI with $13 billion. They are, in the most literal sense, raising the children who are pushing them out of the house.

And yet, Anthropic is one of Microsoft’s largest Azure customers. The emerging orchestrators depend entirely on the hyperscalers’ infrastructure. Anthropic alone has contracted approximately $80 billion in cloud costs through 2029. If Anthropic succeeds in capturing the orchestration premium from Microsoft’s application businesses, Microsoft simultaneously loses high-quality application revenue and becomes increasingly dependent on lower-quality infrastructure revenue. The money circulates but changes character. It enters as orchestration. It exits as plumbing. The parent funds the child who displaces it. The child pays rent on the way out.

NVIDIA’s Agent Toolkit highlights the paradox. It makes cloud infrastructure fungible for agent swarms by enabling any workload on any cloud, routed on millisecond-level spot pricing. In doing so, NVIDIA erodes the switching costs that justify premium cloud pricing. Good for NVIDIA in the short term: more orchestrators, more GPU demand. Potentially catastrophic in the medium term: if the infrastructure built with those GPUs earns commodity returns, the incentive to keep buying GPUs at premium prices evaporates. Everyone is building the road that leads to someone else’s destination.

The Capex debate, as currently conducted, asks whether the spending is too much or too little. It is the wrong frame. It assumes the spend is buying the same thing across companies. Once the spend is decomposed by layer, the question becomes whether the layer being purchased holds its margin in an era where agents, not humans, are the operators. And whether the company doing the spending owns the adjacent layers needed to make the spending defensible.

The answer is not the same for the four hyperscalers. Google owns Layer 1, captures the Harness internally, and pays for the buildout with a Layer 3 revenue line that is eroding but not collapsing. Amazon owns a fulfillment substrate that agents amplify, and its Layer 0 chip business is now monetizing internally at scale. Meta owns an attention substrate (Layer 3) that is largely agent-resilient, with a narrower exposure to commerce and an open-source hedge. At Layer 3, Microsoft owns a distribution surface that compounds and a work-surface franchise that does not.

The market is currently pricing all four as if Layer 2 commoditization and Layer 3 erosion hit them symmetrically. They will not.

The recognition the framework predicts has likely been delayed by one specific gap: there is no clean public-market comparable for an integrated Layer 1+2+3 platform that is not also a hyperscaler. Anthropic’s reported preparation for an October 2026 IPO matters here. A liquid, priced comparable for an orchestration company expanding from the Harness into the application layer will create a reference point that does not currently exist. When it arrives, the hyperscaler multiples will be repriced against it, not in isolation.

The Capex was never the question. The question, properly asked, is which layer the Capex is buying, whether that layer holds its margin once the operator shift completes, and whether the company doing the spending owns the adjacent layers needed to make the spending defensible. For most of the announced Capex at three of these four companies, the answer is: only partially. That is what the multiple has to absorb.

The recognition has not arrived in full. The conditions for its arrival are now in place.

For the broader framework: Orchestration Economics lays out the value-capture logic; The AGNT Manifesto places this hyperscaler reckoning inside the wider thesis on how the Agentic Era reorders enterprise value.

The views and opinions expressed in this publication are those of the author alone and are based on publicly available information. The expressed views and opinions do not constitute investment advice, a solicitation, or a recommendation to buy or sell any security or financial instrument. The author may hold positions in the securities of companies mentioned. Certain companies referenced may be current or former clients of, or counterparties to, the author or affiliated entities; such relationships will be disclosed where applicable. Past performance is not indicative of future results. To the fullest extent permitted by applicable law, the author does not accept any liability for any loss or damage arising from reliance on this content. Readers should conduct their own independent due diligence and consult a qualified financial advisor before making any investment decision.