OpenAI at $730 Billion: The Clouds Are Forming

A record raise, a $218B projected cash burn, and a shift from training monopoly to inference competition. The story isn’t about one company. It’s about the risky capital architecture underpinning the AI economy.

OpenAI’s record-setting $110 billion funding round is packed with revelations: the investors who hedged, the losses that necessitated it, and the competitive shift that threatens it. These looming risks are about more than one company. They are about the fragility now embedded in the financial architecture of the entire AI economy.

Last October, I published an analysis titled “King Sam and AI Circularity.”

The argument was that hundreds of billions of dollars in AI infrastructure financing were flowing through circular deals where customers are suppliers, suppliers are investors, and all roads lead to OpenAI. The greatest risk, I wrote, was not that AI fails, but that the single company everyone had backed would fail to build a defensible moat, triggering contagion that strands capital and stalls the buildout before the transformation completes.

“You can be bullish on AI and bearish on this financial architecture,” I argued. “They’re separable propositions.”

Five months later, that financial architecture has been stress-tested, and it has shown cracks in places I did not expect.

On Thursday last week:

NVIDIA reported the largest, cleanest earnings beat in semiconductor history. The stock fell 5.5%. The numbers were perfect; the reaction was not about Nvidia itself but about Nvidia as a symbol of the “AI trade”: the huge AI capex numbers of Nvidia’s customers, and whether the revenues would show up on a timeline compatible with public markets’ expectations.

CoreWeave, the GPU cloud company at the center of the AI infrastructure buildout, reported revenue growth of 168%. The stock crashed 18.5%.

OpenAI announced $110 billion in new capital at a valuation north of $730 billion. It is the largest private funding round in history. It is also a very high valuation: on $13 billion of revenue in 2025, that is ~56x revenue. Anthropic’s latest valuation, at ~27x, pales in comparison.

Record performance. Record sell-offs. Record capital raise.

These are not three separate stories. They are one story. It is a story about what happens when the financial architecture built for one paradigm - a training-compute monopoly - collides with the emergence of another: an inference-compute oligopoly where the positions are not established, the moats are shallow, and the company at the center of the web projects $218 billion in cash burn before turning a profit.

What makes this moment different is not the scale of the numbers. Silicon Valley has seen large rounds before. It has seen bubbles before. What it has not seen is a single private company sit at the center of a capital web this large, one that links sovereign wealth, hyperscalers, chipmakers, leveraged cloud intermediaries, and public equity markets into a single interdependent system.

The AI boom is no longer a collection of startup bets. It is a coordinated financial architecture.

When capital structures become architectures, fragility changes form. Risk is no longer isolated to the failure of an individual company; it becomes systemic because cash flows, contracts, and balance sheets are braided together. The question is no longer whether OpenAI can build better models. It is whether the structure built around it can withstand a shift in the underlying economics of compute.

That shift is now underway.

In my “Two Tales of Compute” series, I drew a sharp line between training compute and inference compute. Training is the capex: massive, synchronized GPU clusters running for weeks to produce a model. Inference is the opex: the per-query, per-token cost of serving that model to users, billions of times daily.

Training is where the arms race lives. Inference is where the economics resolve.

This distinction always mattered. After this week, it is the only distinction that matters.

To understand what this week actually revealed, we have to trace the capital flows, follow the compute, and decode the shifting economics beneath them.

The $110 billion round nobody fully backed

The OpenAI round reveals more than its surface suggests.

On Thursday, OpenAI announced it had raised $110 billion at a valuation north of $730 billion. The headline was triumphant. The details were not.

NVIDIA’s contribution landed at $30 billion. That figure requires context.

In September 2025, NVIDIA signed a letter of intent to invest up to $100 billion in OpenAI, contingent on deploying 10 gigawatts of NVIDIA systems. It was the centerpiece of a relationship that appeared to define the AI era: the dominant chipmaker backing the dominant model provider, each reinforcing the other.

By January, the Wall Street Journal reported the deal had “stalled.” NVIDIA CEO Jensen Huang told people privately that it had never been binding. He criticized what he called a lack of discipline in OpenAI’s business approach and voiced concerns about the competitive threat posed by Google and Anthropic. In early February, he confirmed publicly that $100 billion “was never a commitment” and that Nvidia would “invest one step at a time.”

The $30 billion investment represents a 70% retreat. It arrived alongside a $10 billion NVIDIA investment in Anthropic and $2 billion in CoreWeave. The message was unmistakable: NVIDIA is diversifying away from a single-counterparty bet on OpenAI.

Amazon committed $50 billion, the largest investment it has ever made in any company. But only $15 billion is upfront. The remaining $35 billion is contingent on milestones that, per The Information, are tied to OpenAI either achieving artificial general intelligence or completing an IPO.

The single largest commitment in the largest private round in history hinges, absent an IPO, on a scientific breakthrough that no one can define, and for which no one can provide a comfortable timeline.

Microsoft, which has been OpenAI’s anchor financial supporter since 2019 with over $13 billion committed across multiple rounds, did not participate.

Three of the entities that understand OpenAI’s economics most intimately each sent a different signal, and none of them was unqualified confidence.

What makes this harder to dismiss is the other side of the ledger.

NVIDIA and Amazon are not merely investors in OpenAI. They are among its largest commercial partners. As part of the Amazon deal, OpenAI’s total AWS commitment expanded to approximately $138 billion over eight years, making it one of Amazon’s single largest cloud customers. William Blair estimated the arrangement could deliver roughly $17 billion annually to AWS, about 11% of its expected 2026 revenue. NVIDIA, of course, sells OpenAI the GPUs it consumes at an industrial scale.

These are not arm’s-length transactions. They are circular flows where investors are also suppliers, suppliers are also customers, and capital moves in loops that make it genuinely difficult to separate commercial demand from financial engineering. Both companies had powerful strategic reasons to participate in this round, reasons that had little to do with OpenAI’s intrinsic value as an investment.

Even so, both hedged.

I want to be very clear about something. I do not question OpenAI’s performance as a model provider. Codex, its multi-agent software engineering platform, has reached over a million weekly active developers and represents real product-market fit. The GPT-5.x frontier series achieves state-of-the-art results across multiple professional benchmarks. OpenAI Frontier, the enterprise agent platform now distributed exclusively through AWS, is a strategically smart move.

These are serious products from a serious technical organization.

But OpenAI’s own financial projections tell a specific story about where the money comes from. And it is overwhelmingly a consumer story.

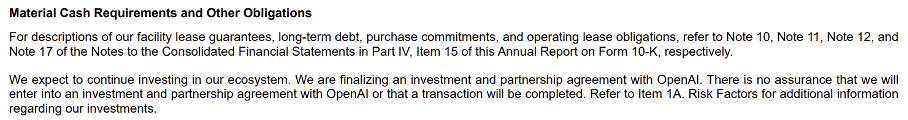

In 2025, ChatGPT subscriptions generated roughly 75% of the company’s $13.1 billion in revenue. Enterprise contributed approximately $2 billion. API revenue crossed $1 billion. By 2030, OpenAI projects consumer revenue at around $150 billion (still over half the total), with enterprise at $70 billion and API at $47.5 billion.

At every point in the forecast, consumer revenue remains the dominant engine.
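The revenue mix can be checked with a few lines of arithmetic. This is a minimal sketch using only the figures quoted above; it assumes the three 2030 segments (consumer, enterprise, API) make up the entire forecast, which may not match how OpenAI’s internal projections are actually structured. The helper `segment_shares` is hypothetical.

```python
# Revenue figures quoted in the text, in billions of US dollars.
REVENUE_2025 = 13.1
CONSUMER_SHARE_2025 = 0.75  # "roughly 75%" from ChatGPT subscriptions

# Assumption: these three segments are the whole 2030 projection.
projection_2030 = {"consumer": 150.0, "enterprise": 70.0, "api": 47.5}


def segment_shares(segments: dict[str, float]) -> dict[str, float]:
    """Return each segment's share of the sum of the listed segments."""
    total = sum(segments.values())
    return {name: value / total for name, value in segments.items()}


consumer_2025 = REVENUE_2025 * CONSUMER_SHARE_2025
shares_2030 = segment_shares(projection_2030)

print(f"2025 consumer revenue: ~${consumer_2025:.1f}B")
for name, share in shares_2030.items():
    print(f"2030 {name}: {share:.1%} of listed segments")
```

Under that assumption, consumer lands at roughly 56% of the 2030 total, consistent with “still over half.”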

The monetization strategy for that consumer base now rests substantially on advertising. Sam Altman called advertising a “last resort” as recently as May 2024. By January 2026, OpenAI was testing targeted ads in ChatGPT for users on its free and Go tiers. Internal projections show $1 billion from ad monetization this year, scaling to $25 billion by 2029.

These are ambitious numbers for a monetization model that no AI company has yet proven. Google, which generates over $200 billion annually from an advertising business built on two decades of infrastructure, only announced plans to bring ads to Gemini this year. That validates the direction, perhaps, but also creates formidable competition.

Anthropic went the opposite way entirely, running a Super Bowl ad promising that Claude would remain ad-free.

Meanwhile, ChatGPT’s market share has declined from 86.7% in January 2025 to roughly 64.5% by early 2026. Google Gemini’s monthly active users grew 30% over the August-to-November period while ChatGPT’s grew 6%. Altman issued an internal “code red” memo in December, urging staff to improve the product in response to Google’s advance. The roughly 900 million weekly active users provide scale, but engagement is shallow: 80% sent fewer than 1,000 messages all year.

Strong products. Serious competition. A consumer-heavy revenue mix dependent on unproven monetization. And against that backdrop, a cost structure that defies historical comparison.

$218 billion before breakeven

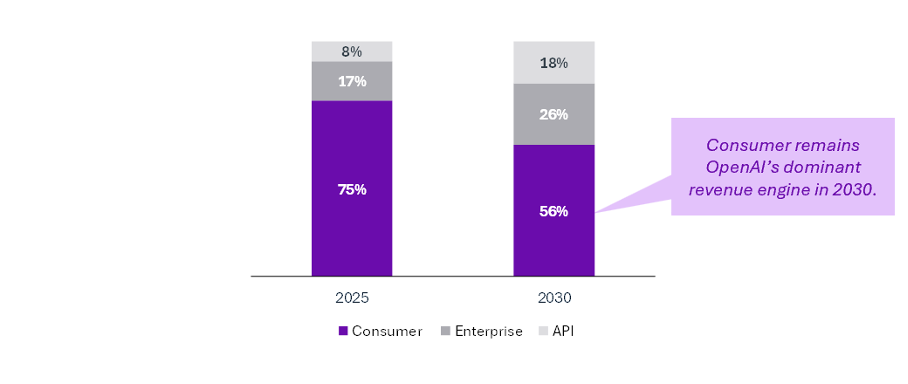

OpenAI’s latest internal projections, reported by The Information in February 2026, describe a loss trajectory unlike anything in startup history.

Net cash burn of $25 billion this year. $57 billion in 2027. $85 billion in 2028. $51 billion in 2029. Positive cash flow does not arrive until 2030. If it arrives. Cumulative burn over the next four years: $218 billion. Cumulative compute spending through 2030: $665 billion. HSBC estimated that OpenAI “needs to raise at least $207 billion by 2030 so it can continue to lose money.”

This bears repeating in context.

Before OpenAI, the largest cumulative startup losses in history were Uber’s, at roughly $33 billion. OpenAI’s projected cash burn of $218 billion through 2029 would amount to just over six and a half times Uber’s all-time total.

The Manhattan Project cost about $30 billion in today’s dollars. The Apollo program cost $288 billion over thirteen years. OpenAI projects $665 billion in compute spending (encompassing training and inference costs) in roughly five years, per The Information.
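The cumulative figures and comparisons above follow directly from the yearly projections. A minimal sketch, assuming “this year” in The Information’s projection means 2026 and treating every figure as quoted in the text (billions of US dollars); note that the Apollo comparison sets a roughly five-year spending total against a thirteen-year program, so it is a rough scale comparison only.

```python
from itertools import accumulate

# Projected net cash burn by year, in billions of US dollars.
# Assumption: "this year" in the projection is read as 2026.
burn_by_year = {2026: 25, 2027: 57, 2028: 85, 2029: 51}

# Running total of burn, year by year.
running_total = list(accumulate(burn_by_year.values()))
cumulative_burn = running_total[-1]

# Reference points quoted in the text, in billions.
UBER_CUMULATIVE_LOSSES = 33
APOLLO_PROGRAM = 288
COMPUTE_SPEND_THROUGH_2030 = 665

for year, total in zip(burn_by_year, running_total):
    print(f"{year}: cumulative burn ${total}B")

print(f"Multiple of Uber's all-time losses: {cumulative_burn / UBER_CUMULATIVE_LOSSES:.1f}x")
print(f"Compute spend vs. Apollo program: {COMPUTE_SPEND_THROUGH_2030 / APOLLO_PROGRAM:.1f}x")
```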

And the forecasts keep deteriorating.

Cumulative cash burn estimates have escalated from $34 billion in Q1 2025 projections to $115 billion by Q3 2025, and now to $218 billion. That’s a $111 billion deterioration in under a year. Gross margins dropped from 40% in 2024 to 33% in 2025, well below the company’s own 46% target, as inference costs quadrupled to $8.4 billion. As a reference point, and despite the difference in business models, successful SaaS businesses maintain gross margins above 70%.

This is the distinction I keep coming back to. Investors are not financing a technology. They are financing a company.

The technology will persist regardless of what happens to OpenAI’s balance sheet. Open-source models will proliferate (DeepSeek V4 should arrive this week). Anthropic, Google, Meta, and a growing roster of Chinese labs are all building frontier-capable systems. The question is whether the financial architecture constructed around this company can withstand the strain, or whether its eventual restructuring sends shocks through an ecosystem that has become dangerously interconnected.

Feeling the burn

Follow the money one step further, because much of what OpenAI burns flows to companies that reported earnings this same week. The results were revealing.

NVIDIA’s quarter was, by any historical measure, superlative: $68.1 billion in revenue, up 73% year-over-year. Data center revenue reached a record $62.3 billion. Next-quarter guidance came in at $78 billion, beating consensus by over $5 billion. Morgan Stanley called it “the largest, cleanest beat and raise in the history of the semis industry.” The stock fell 5.5% the next day, wiping out $260 billion in market capitalization. That’s Nvidia’s worst single-session decline since April 2025.

The sell-off tells you where investor anxiety has migrated. The market is no longer debating whether Nvidia can sell GPUs. It is increasingly debating whether Nvidia’s growth should be discounted for circularity, and whether this whole AI build-out makes sense.

PitchBook data shows Nvidia deployed $23.7 billion across 59 investments in its own customers in 2025 alone, up from roughly $1 billion the prior year. Tomasz Tunguz calculated that these commitments total approximately 67% of annual revenue. That’s 2.8 times larger, relative to revenue, than Lucent’s customer-financing exposure before the dot-com bust. Fortune reported that all the money Nvidia invested in CoreWeave came back as revenue. Half of Nvidia’s data center revenue comes from just three customers.

When a supplier finances its customers at this scale, 73% growth is not self-evidently the same thing as 73% organic demand.

CoreWeave’s numbers were starker.

Full-year revenue of $5.13 billion, up 168%, but a net loss of $1.17 billion, and total debt tripled to $21.37 billion. The debt-to-equity ratio: 894%. Interest expense: $1.23 billion. Levered free cash flow: negative $5.27 billion. The company is guiding capital expenditure of $30-35 billion for 2026 and reportedly seeking an additional $8.5 billion loan. Much of its debt is secured by GPUs at roughly 14% interest rates - triple investment-grade corporate debt - with depreciation schedules assuming six-year useful lives for hardware that Nvidia’s release cadence renders functionally obsolete in two to three years.

The stock crashed 18.5%.
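The gap between a six-year depreciation schedule and a two-to-three-year economic life is easier to see with numbers. This is a minimal sketch under loudly stated assumptions: a hypothetical $1 billion GPU purchase, straight-line depreciation with zero salvage value on both schedules, a three-year “economic” life taken as a simple linear decline to zero, and full debt financing at the roughly 14% rate cited above. CoreWeave’s actual accounting and loan terms are more complex; the helper `book_value` is hypothetical.

```python
def book_value(cost: float, useful_life_years: int, years_elapsed: int) -> float:
    """Book value under straight-line depreciation with zero salvage value."""
    remaining_years = max(useful_life_years - years_elapsed, 0)
    return cost * remaining_years / useful_life_years


FLEET_COST = 1_000_000_000  # hypothetical $1B GPU purchase
ACCOUNTING_LIFE = 6         # depreciation schedule described in the text
ECONOMIC_LIFE = 3           # upper end of the 2-3 year obsolescence window cited
INTEREST_RATE = 0.14        # approximate rate on GPU-backed debt, per the text

# Assumption: the whole purchase is debt-financed at INTEREST_RATE.
annual_interest = FLEET_COST * INTEREST_RATE

for year in range(ACCOUNTING_LIFE + 1):
    on_books = book_value(FLEET_COST, ACCOUNTING_LIFE, year)
    economic = book_value(FLEET_COST, ECONOMIC_LIFE, year)
    print(f"year {year}: books ${on_books / 1e6:,.0f}M, "
          f"economic ${economic / 1e6:,.0f}M, "
          f"gap ${(on_books - economic) / 1e6:,.0f}M")

print(f"annual interest: ${annual_interest / 1e6:,.0f}M")
```

Depreciation is a non-cash charge, so the gap does not move cash flow directly. It shows up in reported earnings, and in what the collateral is worth if a lender ever has to sell it.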

Microsoft accounts for 62% of CoreWeave’s revenue. Direct OpenAI contracts add another $22.4 billion in committed value. And the picture gets muddier from there: much of Microsoft’s reserved capacity supports OpenAI workloads, so some share of CoreWeave’s Microsoft revenue likely traces back to OpenAI as well.

The structural comparison analysts keep reaching for is not AWS in its early days. It is the telecom equipment leasing deals of the late 1990s, except that the customers burning through today’s leased capacity are themselves unprofitable startups rather than established telecoms. Opaque exposure and heavy concentration among a handful of large customers naturally make investors nervous.

From training monopoly to inference oligopoly

Now we come to the part of the story where the risk starts to compound.

Everything I have described (the hedging by Nvidia and Microsoft, the conditional capital from Amazon, the unprecedented losses, the circular financing, the leveraged intermediaries) becomes considerably more concerning when set against the competitive shift underway in AI compute.

The financial architecture surrounding OpenAI was built for a world of training-compute monopoly, where Nvidia’s dominance was essentially uncontested. In training, that remains largely true. NVIDIA’s CUDA ecosystem, built over twenty years and used by four million developers, creates switching costs that are close to insurmountable. NVIDIA controls over 90% of training compute.

The moat is real. But the workloads are migrating.

Inference now accounts for roughly half of all AI compute, up from a third in 2023, and Deloitte projects it will reach two-thirds by the end of this year. This is the shift to what I have called the inference economy, where compute becomes the primary factor of production, where token costs substitute for labor costs, and where the economics of AI resolve not in the training cluster but in the serving infrastructure.

The change in compute workloads and the resulting hardware obsolescence were among our key concerns when CoreWeave went public last year:

“Beyond GPU-specific risks, it’s possible that AI workloads may evolve toward different hardware profiles than currently anticipated. Specialized AI chips (ASICs) might begin outperforming GPUs for specific workloads, creating a scenario where CoreWeave’s investments become misaligned with market demand. In both this outcome and the hardware obsolescence case described above, the company could face a surplus of hardware, weakened collateral for its debt, and a significant cash requirement to acquire new hardware aligned with evolving market demands. In such circumstances, access to capital becomes vital for survival.”

-- (Extracted from CoreWeave S-1 teardown, Decoding Discontinuity, March 2025)

As I explored in “Two Tales of Compute”, training happens once; inference happens billions of times daily. The business model lives or dies on inference economics.

When it comes to inference, Nvidia is not a monopolist. It is one competitor among several in a market where positions are far from established.

The evidence of this vulnerability was hard to miss last week.

On Tuesday, just two days before Nvidia’s record earnings, AMD and Meta announced a deal worth up to $100 billion: six gigawatts of AMD’s MI450 GPUs for Meta’s AI infrastructure, with AMD issuing performance-based warrants for up to 160 million shares. It mirrors the AMD-OpenAI deal from October 2025, also for six gigawatts. Between the two agreements, AMD now holds 12 gigawatts of committed orders.

Meta CEO Mark Zuckerberg’s language was pointed: the partnership is about “efficient inference compute” and diversifying Meta’s supplier base. Analysts noted that Nvidia’s CUDA moat is considerably narrower in inference than in training. Microsoft was reportedly building toolkits to translate CUDA code specifically for AMD GPUs to cut inference costs.

Google’s TPU v7 Ironwood, the first TPU built specifically for inference, delivers a 44% lower total cost of ownership than Nvidia’s GB200, according to SemiAnalysis - hardly a bearish source on Nvidia. Anthropic has committed to over a million Ironwood chips. Midjourney moved its inference from Nvidia to Google TPUs, cutting monthly compute costs by 65%. Amazon’s Trainium claims 30-40% better price-performance for inference workloads. In a Japanese AWS deployment, 12 of 15 organizations chose Trainium over Nvidia.

This is no longer a training-GPU monopoly. It is an inference-GPU oligopoly, and the positions within it are being contested right now, with hundred-billion-dollar deals, by companies with the resources and the strategic incentive to erode Nvidia’s pricing power in the workload category that is becoming the majority of all AI compute.
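A cost reduction and a gain in compute per dollar are not the same number, which matters when comparing the Ironwood, Midjourney, and Trainium figures above. This is a minimal sketch, assuming each quoted percentage is a reduction in total cost for an identical workload (the underlying sources may define their baselines differently); the function name `work_per_dollar_gain` is hypothetical.

```python
def work_per_dollar_gain(cost_reduction: float) -> float:
    """Extra work a fixed budget buys when the cost of the same workload
    falls by `cost_reduction` (e.g. 0.44 for 44%)."""
    if not 0 <= cost_reduction < 1:
        raise ValueError("cost_reduction must be in [0, 1)")
    return 1 / (1 - cost_reduction)


# Cost reductions quoted in the text (assumed to be for identical workloads).
reported_cuts = {
    "Ironwood vs. GB200 (TCO, per SemiAnalysis)": 0.44,
    "Midjourney move to TPUs (monthly compute cost)": 0.65,
}

for label, cut in reported_cuts.items():
    gain = work_per_dollar_gain(cut)
    print(f"{label}: {cut:.0%} cheaper -> {gain:.2f}x work per dollar")
```

Trainium’s 30-40% figure is stated as price-performance, which already measures work per dollar, so it maps directly to 1.3x-1.4x without this conversion.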

And it is not only the hyperscalers.

The same week OpenAI closed its $110 billion round, a 24-person Toronto startup called Taalas announced it had raised $169 million, bringing its total funding to $219 million. Taalas does something architecturally radical: it etches AI model weights directly into silicon, eliminating the memory-fetch bottleneck that throttles every GPU inference stack in existence. Its first chip, the HC1, runs Meta’s Llama 3.1 8B at 17,000 tokens per second. That’s 73 times faster than Nvidia’s H200, at a tenth of the power, built by a team that spent $30 million over two and a half years. Fabricated on TSMC’s 6-nanometer process, each design moves from model weights to working silicon in roughly two months.

Taalas is not an Nvidia competitor in the way AMD or Google are. It is something more structurally significant: evidence that inference is entering its specialization era, where the economics increasingly favor purpose-built silicon over general-purpose GPUs. Of course, Taalas is still an early-stage startup. But when a two-dozen-person team can deliver orders-of-magnitude inference gains over a $30,000 GPU, the moat around general-purpose compute is not what it was.
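The memory-fetch bottleneck can be estimated with back-of-the-envelope roofline reasoning: when a single stream generates one token at a time, every weight must be read from memory for each token, so memory bandwidth divided by model size caps tokens per second. This is a minimal sketch, not Taalas’s published methodology; the ~8 billion parameter count, 16-bit weights, and ~4.8 TB/s H200 bandwidth are assumptions, and KV-cache reads and compute limits are ignored.

```python
def decode_ceiling_tokens_per_sec(num_params: float, bytes_per_param: float,
                                  mem_bandwidth_bytes_per_sec: float) -> float:
    """Upper bound on single-stream decode speed when every generated token
    must stream all model weights from memory.

    Ignores KV-cache traffic, compute limits, and batching, so this is a
    rough ceiling rather than a benchmark.
    """
    bytes_read_per_token = num_params * bytes_per_param
    return mem_bandwidth_bytes_per_sec / bytes_read_per_token


LLAMA_31_8B_PARAMS = 8.0e9  # assumption: approximate parameter count
BYTES_PER_PARAM = 2         # assumption: 16-bit (BF16/FP16) weights
H200_BANDWIDTH = 4.8e12     # assumption: H200 HBM3e bandwidth, ~4.8 TB/s

gpu_ceiling = decode_ceiling_tokens_per_sec(
    LLAMA_31_8B_PARAMS, BYTES_PER_PARAM, H200_BANDWIDTH)

# Baseline implied by the quoted figures: 17,000 tokens/s at 73x faster.
implied_h200_baseline = 17_000 / 73

print(f"H200 bandwidth ceiling: ~{gpu_ceiling:.0f} tokens/s per stream")
print(f"Baseline implied by the 73x claim: ~{implied_h200_baseline:.0f} tokens/s")
```

The implied baseline sits just under the bandwidth ceiling, which suggests the 73x comparison is a per-stream speed figure. Batching lets a GPU reuse each weight read across many requests, so aggregate-throughput comparisons would presumably look different.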

Conclusion

The tension now embedded in the AI economy is structural, not cyclical.

A financial system was constructed around the assumption that training-compute dominance would anchor durable monopoly economics. Instead, the center of gravity is shifting toward inference, where pricing power is contested, specialization is accelerating, and capital intensity remains extreme. The result is an architecture carrying historic levels of projected burn, circular capital flows, supplier concentration, and leverage. This is all happening at the precise moment the underlying economics are becoming less certain.

The technology is advancing rapidly. The question is whether the capital structure surrounding it is evolving just as fast.

None of this determines the outcome. OpenAI could execute, margins could improve, and inference economics could stabilize in its favor. But the range of plausible futures is widening, not narrowing.

If the transition from training monopoly to inference competition accelerates, the implications will extend beyond a single balance sheet, reshaping how AI infrastructure is financed, who captures value in the stack, and how much risk public markets are willing to underwrite.

The future of AI is unlikely to hinge on one company. But the financial system built around one company may yet hinge on how this transition unfolds.

Disclaimer: This post reflects the author’s opinions and analysis based on publicly available information. It is not investment advice or a recommendation to buy, sell, or hold securities. Readers should conduct independent research and consult financial advisors before making investment decisions.