Anthropic Claude Code Leak: Decoding Its Blueprint for the Orchestration Graph

512K lines of leaked code reveal a domain-agnostic AI orchestration engine, self-rewriting memory, and the foundations of synthetic colleagues poised to reshape enterprise value and workflows.

Anthropic’s accidental leak wasn’t a security story so much as a strategy document written in TypeScript. What those 512,000 lines reveal is an AI coding tool whose underlying architecture is far more general than the product it currently serves - a domain-agnostic orchestration harness equipped with self-rewriting memory, an unshipped autonomous daemon called KAIROS, and multi-agent delegation models that are already shipping alongside more ambitious coordination capabilities still sitting behind feature flags. The architecture’s most consequential implication is that orchestration and synthetic colleagues are not separate phenomena but two consequences of the same engineering, linked by a causal chain that runs through the memory system now visible in the source - orchestration produces operational intelligence, operational intelligence absorbs the domain expertise moat, and the absorbed moat is precisely what qualifies the agent to perform the work autonomously. One architecture, two consequences.

Anthropic’s 2026 momentum got a sharp reality check with the shocking news that it had inadvertently leaked the complete internal architecture of Claude Code via a routine software update. All the secrets of the product that had catapulted Anthropic to front-runner status were suddenly spreading like wildfire across GitHub repositories worldwide, despite the company’s aggressive takedown efforts.

The embarrassment and the threat to its expected IPO later this year became the story. But all those lines of code held another, more compelling revelation: the architecture.

Flash back to a year ago, as headlines obsessively tracked the model race and benchmark leaderboards. I argued that the real competition determining who captures value in the AI economy was not about who built the best model. It was about who controls orchestration: the layer that receives intent, coordinates execution, and compounds the learning from doing both.

Step one toward winning the orchestration layer: the “Coding Wedge.” This was the term I used to describe how coding offered the right blend of structure and capabilities to be the best early use case for agentic AI and orchestration. And it was clear, almost from the start, that Anthropic had built a superior tool with Claude Code. As the market assumed last year that OpenAI was building an insurmountable overall market-share lead, I kept watching as Anthropic widened the gap in the enterprise.

By the fall, those advantages began to compound so rapidly that most observers were caught off guard. From the end of 2025 through the start of 2026, Anthropic released new products and updates, such as Claude Cowork, at a relentless pace. It has become such a presence that the announcement of niche plugins in early February triggered the infamous $285 billion SaaSpocalypse software selloff.

Here we are, almost a year later, and Anthropic is ascendant. It has just hit $30B in run-rate revenue, up from $9B at the end of 2025. And yet, the wedge is not a moat. Anthropic has established an early beachhead at the orchestration layer, but no one’s position there is secured.

So the question now is: what’s next?

As it turns out, the Claude Code leak reveals what comes after the wedge and how the orchestration layer is evolving. It shows how one lab is building durable control and where value is migrating in the Agentic Era. These may sound like technical details (just lines of code, after all), but they’re not.

The problem with the SaaSpocalypse wasn’t just that it overreacted to a niche product. It was a sweeping judgment that treated all software companies as equally vulnerable. The emerging rules of Orchestration Economics will require a more sophisticated approach, one that starts with knowing the right questions to ask and understanding how they map onto the new paradigm.

In this case, the Claude Code leak fills in important new details of that map and shows how we need to shift our thinking about orchestration beyond the notion of a layer. The battle is no longer about whether orchestration matters.

It is about who becomes the center of what I will call the “orchestration graph.”

The Orchestration Graph

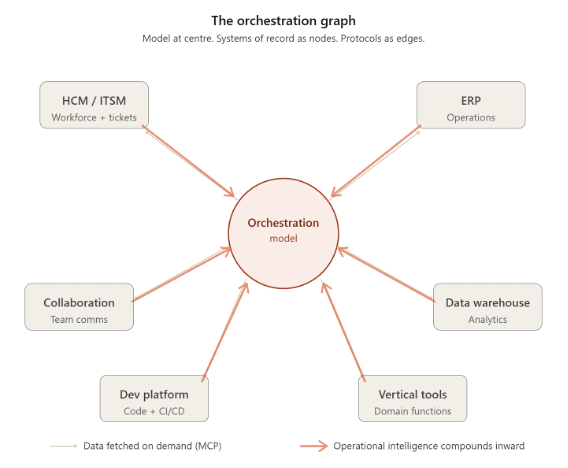

An orchestration graph is the architecture that emerges when a model becomes the center of an enterprise’s work, rather than a tool at its periphery.

The model does not own the enterprise’s data. Through open protocols such as Anthropic’s Model Context Protocol (MCP), the model can reach outward to the CRM/ITSM/HCM for customer/IT/HR data, the ERP for operational records, and the data warehouse for analytics. It calls the vertical tools for domain-specific functions.

Each system of record becomes a node in the graph. The model is the center. Protocols are the edges. Data flows outward on demand, is processed, and the result is returned. The operational intelligence the model builds from coordinating these workflows flows inward and compounds at the center.

Each system of record sees only its own transactions. The orchestrator sees the workflow that connects them all. Over time, that cross-system understanding becomes the most valuable context in the enterprise. It belongs to whoever occupies the center of the graph.
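To make the node, edge, and center picture concrete, here is a minimal Python sketch, assuming in-memory dictionaries stand in for real systems of record and a plain method call stands in for a protocol edge such as MCP. The names `SystemOfRecord`, `Orchestrator`, and `run_workflow` are hypothetical; they do not come from the leaked code or from the MCP specification. The sketch illustrates one structural point only: each node can answer for its own records, while the cross-system path is recorded only at the center.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class SystemOfRecord:
    """A node in the graph (hypothetical): it sees only its own records."""
    name: str
    records: Dict[str, dict]

    def fetch(self, key: str) -> dict:
        return self.records[key]


@dataclass
class Orchestrator:
    """The center of the graph (hypothetical): it calls nodes and keeps what it learns."""
    nodes: Dict[str, SystemOfRecord] = field(default_factory=dict)
    # Cross-system observations accumulate here and nowhere else.
    operational_notes: List[str] = field(default_factory=list)

    def connect(self, node: SystemOfRecord) -> None:
        # Registering a node stands in for a protocol edge (e.g. an MCP server).
        self.nodes[node.name] = node

    def run_workflow(self, steps: List[Tuple[str, str]]) -> List[dict]:
        # Data is fetched on demand: each step reads from exactly one node.
        results = [self.nodes[node].fetch(key) for node, key in steps]
        # Only the center sees the whole path across systems, so only it can record it.
        self.operational_notes.append(" -> ".join(f"{node}:{key}" for node, key in steps))
        return results
```

For example, a workflow that reads a CRM account and then an ERP purchase order leaves a note such as `crm:acme -> erp:po-17` in `operational_notes`, which neither node could have produced on its own.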

The Claude Code leak makes visible, for the first time, the architecture of a system designed to occupy that center.

The leaked codebase reveals a domain-agnostic orchestration harness. This is a general-purpose infrastructure for coordinating any structured workflow, deployed first through code.

The leaked tool registry contains over 40 capabilities: file operations, web search, code analysis, task management, team coordination, scheduling, skill loading, and multi-agent delegation. A sub-agent system supports three delegation models graduated by autonomy: Fork for bounded subtasks, Teammate for autonomous agents with their own persistence, Worktree for fully independent parallel workstreams. A hook system with over 25 event types intercepts, denies, rewrites, or transforms any action before or after execution. A remote planning runtime extends to 30-minute horizons with live monitoring.
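The hook behavior described above can be illustrated with a small sketch, assuming hooks are keyed by tool name and run in registration order. This is not the leaked implementation: `HookBus`, `Action`, and `Deny` are hypothetical names, and the real system reportedly covers over 25 event types rather than the two points (before and after execution) modeled here. It shows the behaviors the text names: a pre-execution hook can deny or rewrite an action, and a post-execution hook can transform the result.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Action:
    """A tool call the agent wants to make (hypothetical shape)."""
    tool: str
    args: dict


class Deny(Exception):
    """Raised by a pre-execution hook to block an action."""


# A pre-hook returns a (possibly rewritten) Action, or raises Deny.
PreHook = Callable[[Action], Action]
# A post-hook receives the action and its result and may transform the result.
PostHook = Callable[[Action, object], object]


class HookBus:
    def __init__(self) -> None:
        self.pre: Dict[str, List[PreHook]] = {}
        self.post: Dict[str, List[PostHook]] = {}

    def on_pre(self, tool: str, hook: PreHook) -> None:
        self.pre.setdefault(tool, []).append(hook)

    def on_post(self, tool: str, hook: PostHook) -> None:
        self.post.setdefault(tool, []).append(hook)

    def execute(self, action: Action, tools: Dict[str, Callable[..., object]]) -> object:
        # Policy runs before the tool: any hook may rewrite the action or raise Deny.
        for hook in self.pre.get(action.tool, []):
            action = hook(action)
        result = tools[action.tool](**action.args)
        # After execution, hooks may transform what the agent sees.
        for hook in self.post.get(action.tool, []):
            result = hook(action, result)
        return result
```

Because pre-hooks run in registration order, ordering is itself policy: a deny check registered before a rewrite sees the original action, while one registered after it sees the rewritten one.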

These are the primitives of general-purpose work orchestration. Decompose a goal. Delegate to specialists. Enforce policy. Coordinate. Verify. Consolidate what was learned. None of this is specific to software engineering.

The same architecture that processes pull requests can process insurance claims or compliance reviews, using different tools loaded into the memory system, but with identical infrastructure.

The coding wedge was never a go-to-market tactic. It was an architectural decision baked into the codebase: build a general-purpose harness, deploy it where verification is tightest, accumulate depth, then extend.

Claude Cowork is the extension to non-developers. It runs the same harness with different tools. The plugins that triggered the SaaSpocalypse are small domain-specific extensions on a very large, domain-agnostic foundation. The architecture was designed for generality. The deployment was designed for specificity.

The leak proves both were intentional.

The orchestration graph creates a different kind of gravity from what technology platforms have accumulated before. The traditional moat in enterprise software was data gravity, the sheer weight of transactional records that made migration painful.

The orchestration graph produces “understanding gravity”: the accumulated, cross-system operational intelligence that no individual application can capture, consolidate, and refine session by session. These switching costs are cognitive, not contractual. The longer the orchestrator operates at the center, the deeper the gravity, and the harder its position is to displace.

The graph is not exclusive to model providers. Software incumbents sitting on decades of canonical data possess context gravity that no lab can replicate from zero. An incumbent that builds its own orchestration layer and positions itself at the center of a domain-specific graph has a structural advantage.

The question is speed. The graph’s gravity compounds with every interaction. The window narrows with every session the model provider processes inside the enterprise.

The race is context depth defending against operational intelligence ascending. The leaked architecture shows the ascending side is further along than most incumbents have assumed.

How Anthropic Is Building the Gravity

The domain-agnostic harness is the skeleton of the orchestration graph. Three capabilities visible in the leaked code are what make the position at the center compound.

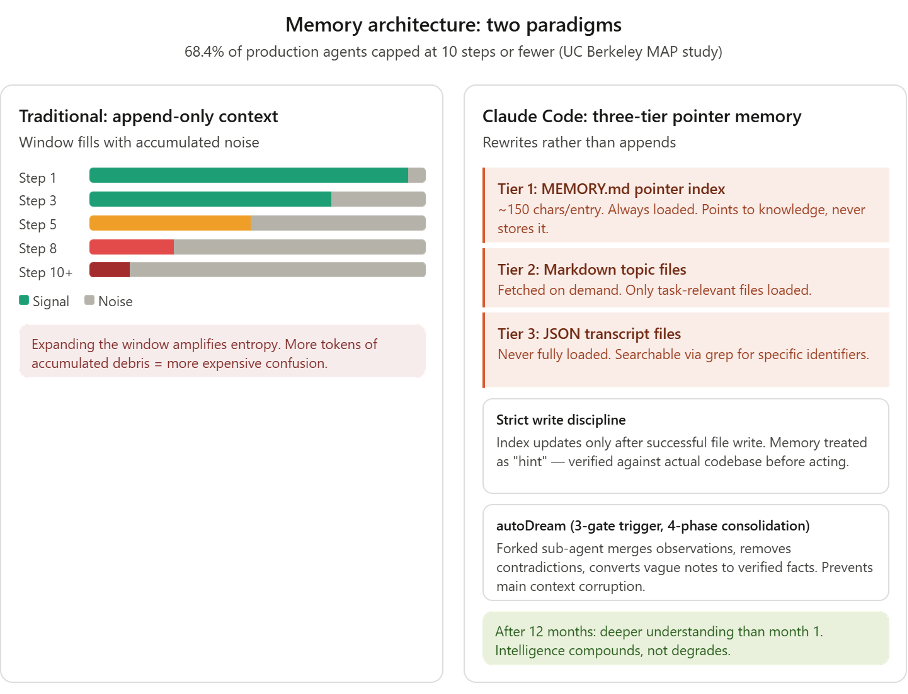

The first is the pointer-based memory system. Every AI agent that runs long enough encounters context entropy. This is the degradation that occurs as sessions accumulate noise, leading the model to contradict itself and lose coherence. The UC Berkeley MAP study found that 68.4% of production agents are capped at ten steps or fewer because of this. Expanding context windows does not solve entropy. It amplifies it. More tokens of accumulated debris mean more expensive confusion.

Claude Code’s memory attacks this directly. It maintains a lightweight pointer index (MEMORY.md) with roughly 150 characters per entry, loaded into every session.

Each entry points to where detailed knowledge lives rather than storing it. The window stays clean. Above this, five compaction strategies continuously prune working memory. And above both sits autoDream: a consolidation system that runs during idle time, spawning a dedicated sub-agent to gather signals from recent sessions, merge new understanding with existing knowledge, resolve contradictions, and rebuild the index to reflect current understanding rather than historical accumulation.
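A minimal sketch of the pointer-index idea, assuming each index line holds a short summary plus a pointer to where the detail lives, and assuming contradictions on the same topic are resolved by recency. `Signal`, `consolidate`, and `render_index` are hypothetical names; autoDream reportedly runs as a dedicated sub-agent during idle time, which a plain function does not model. What the sketch does show is the "rewrite, not append" property: consolidation returns a rebuilt index in which a superseded fact is replaced rather than kept alongside its correction. Entries are trimmed to the roughly 150-character budget the text describes, cutting the summary so the pointer always survives.

```python
from dataclasses import dataclass
from typing import Dict, List

MAX_ENTRY_CHARS = 150  # rough per-entry budget described for MEMORY.md


@dataclass(frozen=True)
class Signal:
    """An observation gathered from a recent session (hypothetical shape)."""
    topic: str
    summary: str
    location: str  # where the detailed knowledge lives, e.g. a notes file
    session: int   # higher means more recent


def render_entry(topic: str, summary: str, location: str) -> str:
    # The line points to detail instead of storing it; only the summary is trimmed.
    prefix, suffix = f"- {topic}: ", f" -> {location}"
    room = max(0, MAX_ENTRY_CHARS - len(prefix) - len(suffix))
    return prefix + summary[:room] + suffix


def consolidate(index: Dict[str, Signal], signals: List[Signal]) -> Dict[str, Signal]:
    """Merge new signals into the index and return a rebuilt index.

    Contradictions on the same topic are resolved by recency (an assumption of
    this sketch). The old entry is replaced, not kept next to the new one.
    """
    merged = dict(index)
    for sig in sorted(signals, key=lambda s: s.session):
        current = merged.get(sig.topic)
        if current is None or sig.session >= current.session:
            merged[sig.topic] = sig
    return merged


def render_index(index: Dict[str, Signal]) -> str:
    # The whole index is regenerated from current understanding on every pass.
    return "\n".join(
        render_entry(s.topic, s.summary, s.location)
        for s in sorted(index.values(), key=lambda s: s.topic)
    )
```

Because `render_index` regenerates every line from the consolidated state, an index that has been through many passes reflects current understanding rather than a growing history of edits.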

The system does not append. It rewrites. After twelve months on a project, the agent understands it better than after one month because it has consolidated, pruned, and restructured what it knows.

The second is KAIROS - a proactive autonomous mode. Referenced over 150 times in the source but not yet shipped, KAIROS receives periodic tick prompts and independently decides whether to act. It persists across sessions. It maintains append-only logs that cannot be erased. It has exclusive tools: push notifications, file delivery, and repository monitoring. This is the transition from reactive to proactive, from an agent that waits for instructions to one that monitors, evaluates, and engages at the opportune moment (kairos, in Greek, meaning the right time to act).
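KAIROS is unshipped and known only from references in the source, so the following is an illustrative sketch of the behavior the text describes, not its design. It assumes each tick arrives with a list of observations, a pluggable `decide` function returns either an action or `None`, and every tick is written to a JSON-lines log opened only in append mode. `TickDaemon`, `Observation`, and `threshold_policy` are hypothetical, and the urgency threshold is an arbitrary rule chosen for illustration. Append mode here only means this code never rewrites the log; it does not make the file tamper-proof.

```python
import json
from dataclasses import dataclass
from pathlib import Path
from typing import Callable, List, Optional


@dataclass
class Observation:
    source: str     # e.g. "repo" (hypothetical)
    detail: str
    urgency: float  # 0.0 to 1.0, assumed to be scored upstream


Decide = Callable[[List[Observation]], Optional[str]]


class TickDaemon:
    """Hypothetical proactive loop: on each tick, decide whether to act."""

    def __init__(self, log_path: Path, decide: Decide) -> None:
        self.log_path = log_path
        self.decide = decide

    def _append(self, record: dict) -> None:
        # Opened in append mode only: this sketch never rewrites or truncates the log.
        with self.log_path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(record) + "\n")

    def history(self) -> List[dict]:
        # State survives restarts because it is re-read from disk.
        if not self.log_path.exists():
            return []
        text = self.log_path.read_text(encoding="utf-8")
        return [json.loads(line) for line in text.splitlines()]

    def tick(self, tick_no: int, observations: List[Observation]) -> Optional[str]:
        action = self.decide(observations)  # None means "stay quiet this tick"
        self._append({"tick": tick_no, "acted": action is not None, "action": action})
        return action


def threshold_policy(obs: List[Observation], bar: float = 0.8) -> Optional[str]:
    """Act only when an observation crosses an urgency bar (illustrative rule)."""
    urgent = [o for o in obs if o.urgency >= bar]
    return f"notify: {urgent[0].detail}" if urgent else None
```

Re-creating the daemon against the same log path recovers its history, which is the simplest version of persisting across sessions; quiet ticks are logged too, so the record shows when the agent chose not to act as well as when it did.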

The third is the operational ceiling of the multi-agent system. The harness’s three delegation models and hook-driven policy enforcement run with effectively unlimited iterations. ULTRAPLAN offloads planning to a remote runtime with a 30-minute horizon. Multi-agent orchestration at this scale is built on infrastructure behind feature flags. This is the capacity for the orchestration graph to handle workflows of arbitrary complexity.

The convergence across the industry is striking.

OpenAI’s Codex CLI uses a structurally parallel two-phase memory pipeline: it extracts memories from sessions and spawns a consolidation sub-agent to update MEMORY.md. Google’s Gemini CLI has added an experimental memory manager agent. Every major lab has converged on pointer-based persistent memory with consolidation. This is an emerging industry standard.

The leak reveals that Anthropic is ahead. Again.

autoDream’s four-phase self-correcting consolidation is more sophisticated than Codex’s two-phase pipeline. The forked sub-agent that prevents memory maintenance from corrupting reasoning has no equivalent elsewhere. KAIROS has no counterpart in any competitor’s codebase. The other labs are building memory. Anthropic is building institutional memory that rewrites itself, enforces its own policies, and decides when to engage.

What this does to domain expertise is the consequence that connects the engineering to the economy.

For twenty years, the strongest defense in enterprise software has been: we possess a deep, irreplaceable understanding of how work gets done in this domain. But domain expertise is not one thing. It is two.

Product-level expertise stays with the incumbent. This includes regulatory logic, compliance rules, and exception handling baked into the software across thousands of customers and decades of operation. An insurance platform like Guidewire embeds how claims processing should work across jurisdictions, with rules that carry legal consequences. Where expertise carries legal weight, the incumbent strengthens. Nothing in the leaked architecture changes this.

Operational expertise is more vulnerable, although the distinction is subtle. A meaningful share of operational knowledge already lives inside the incumbent’s product as configuration: the approval chains in ServiceNow, the forecast methodologies in Salesforce, the escalation rules in Zendesk. When a company spends eighteen months configuring its CRM with specific pipeline stages and regional sign-off requirements, that is operational judgment codified into software. The orchestrator cannot simply read it out.

What the orchestrator can do, over sustained deployment, is learn the operational patterns that sit between systems and above configurations.

These are the judgment calls that no single application captures, because they span multiple tools, rely on informal norms, and evolve faster than anyone updates the settings. The pointer memory indexes how the team actually operates. autoDream consolidates those patterns across sessions. The architecture is built for this kind of accumulation, even if the evidence so far comes from software engineering rather than the broader enterprise domains where the harness has not yet been deployed.

Systems of record store transactions and codified rules. The orchestrator is designed to capture the evolving judgment that connects them. That is what makes the transition from tool to colleague structurally possible: first in software engineering, the domain where the orchestrator already operates, and eventually wherever the harness extends.

From Orchestration to Colleagues

The partial erosion of the domain expertise moat is not the end of the argument. It is the foundation for the most consequential implication of the leaked architecture. And it is the connection that ties together two threads I have been developing separately for the past year: orchestration and “synthetic colleagues.”

An orchestrator that has accumulated twelve months of operational intelligence is not merely a competitive threat to the software vendor whose domain expertise it has absorbed. It is an agent that has become qualified to do the team’s work. The operational understanding that erodes the incumbent’s moat is the same operational understanding that enables autonomous function. Domain expertise was the barrier to autonomous operation. The leaked architecture is dissolving it from within.

This is the causal chain the leak makes visible for the first time. The pointer memory that solves context entropy enables sustained operation. Sustained operation produces compounding operational intelligence. That intelligence erodes the domain expertise moat.

And the eroded moat, the accumulated understanding of how work actually gets done, is precisely what qualifies the agent to perform the work autonomously. Each step depends on the one before it. The architecture that makes orchestration durable is the architecture that enables autonomous colleagues.

They are not two products. They are two consequences of the same engineering.

Applying the Three Laws of Agentic Era Value

Last year, I described a coming transition from applications that enhance productivity to applications that replace organizational functions, becoming synthetic colleagues. The evidence at the time was inferential.

The Claude Code leak provides concrete production evidence of this dynamic. The leaked code is the integrated system: tools for diverse domains, policy enforcement, memory for continuity, and sub-agent delegation for parallel execution. The architectural foundation for domain expertise accumulation and the architectural foundation for autonomous colleagues are one and the same.

KAIROS is the infrastructure for the final step in the chain.

An agent that decides when to monitor, evaluate, and act without being asked is not assisting the knowledge worker. It is performing the function of a knowledge worker. The proactive daemon, built but not yet shipped, represents the phase transition from bounded assistance to autonomous operation. KAIROS is built for a world where the agent takes initiative because it has accumulated sufficient operational understanding to know what to do.

The blast radius of this transition extends across every company the orchestration graph touches, and it reaches each of the Three Laws of Value in the Agentic Era that I defined:

Intent capture migrates. The most valuable position in the workflow is the point where the goal is expressed. When a user tells an agent, “Process this claim,” rather than opening an application, that position moves from the application to the agent. Can the system of record become the system of action, the layer that receives intent? The orchestration graph shows that intent flows to the center. The synthetic colleague captures it directly. The application becomes the node the colleague calls, not the surface the user opens.

Context depth is partially eroded. The data defensibility dimension remains intact thanks to regulatory barriers, network effects, and integration embeddedness. An enterprise cannot rebuild its customer data graph or HR lifecycle records in a matter of weeks. But the operational dimension migrates. The orchestrator builds its own context activation layer over time, independent of the incumbent’s data.

Workflow control is absorbed. Of the Three Laws, this is where the leaked architecture has the most immediate impact. The orchestrator does not ship a competing project management tool. It makes the dedicated tool unnecessary by generating coordination metadata as a by-product of doing the work. When an agent processes a task end-to-end, it inherently tracks what was done, in what sequence, and what remains. The workflow layer emerges from execution rather than existing as a separate surface that the user must maintain. The model provider at the center of the graph ascends across all three dimensions simultaneously.
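The claim that workflow metadata emerges from execution can be shown in a few lines. In this sketch, assuming a plan is an ordered list of steps, the only record of progress is the trace the executor writes while doing the work; there is no separate tracker for anyone to maintain. `Step`, `ExecutionTrace`, and `execute` are hypothetical names, and the claim-processing step names below are illustrative.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Step:
    name: str
    run: Callable[[], object]


@dataclass
class ExecutionTrace:
    """Coordination metadata produced as a side effect of doing the work."""
    done: List[str] = field(default_factory=list)          # what was done, in order
    results: Dict[str, object] = field(default_factory=dict)

    def remaining(self, plan: List[Step]) -> List[str]:
        # "What remains" is derived from the plan and the trace, not entered by hand.
        return [s.name for s in plan if s.name not in self.done]


def execute(plan: List[Step], trace: ExecutionTrace, max_steps: int) -> ExecutionTrace:
    """Run up to max_steps pending steps; the trace doubles as the status report."""
    for step in plan:
        if max_steps <= 0:
            break
        if step.name in trace.done:
            continue  # already completed in an earlier pass
        trace.results[step.name] = step.run()
        trace.done.append(step.name)
        max_steps -= 1
    return trace
```

Running two steps of a three-step plan such as `fetch_claim`, `check_policy`, `draft_decision` leaves `done == ["fetch_claim", "check_policy"]` and `remaining(plan) == ["draft_decision"]`: what was done, in what sequence, and what remains, with no dedicated project-management surface involved.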

The consequence is that the market does not sort by sector. It sorts by where each platform sits relative to the orchestrator.

Platforms whose expertise carries legal or regulatory consequences become throughput nodes, and they are strengthened. The orchestrator must route through them. Guidewire does not lose relevance when the orchestration graph expands, nor does Veeva Systems; each becomes a node the graph cannot bypass. The position is durable precisely because the expertise is non-discretionary. This does not mean these companies are not at risk in the Agentic Era; it means that their context moat provides real protection from LLM displacement of value.

Platforms whose value is coordination metadata - the convenience layers - compress. The orchestrator generates task tracking, team coordination, and workflow status as a by-product of doing the work. It does not need a separate surface for what it produces natively. The mechanism is already visible in many companies’ earnings data.

Platforms whose orchestration thesis rests on proximity to data face a more subtle reframing. The orchestrator fetches data on demand and retains the intelligence it extracts. Proximity to data is infrastructure. It is not the same thing as controlling what happens with it.

But the blast radius extends well beyond the software sector. Beneath this reshuffling is a more fundamental shift that redefines what agentic colleagues actually compete to capture.

Traditional software accounts for roughly 3 to 5% of enterprise revenue. A synthetic colleague that has accumulated enough operational expertise to replace an organizational function does not compete for IT spend. It competes for labor budgets across every function it can perform, including marketing, finance, operations, legal, and customer service.

The addressable market is not the software stack. It is the organizational chart. Google Cloud and BCG estimate an incremental $3 trillion in the application layer. That’s not reallocated IT budget, but new software revenue converted from labor spend across the enterprise.

Race for the Center

The Claude Code leak settles one question and opens another.

The settled question is whether the architecture for agentic colleagues exists in production. It does.

The pointer memory that compounds rather than degrades, the consolidation system that rewrites itself during idle time, and the autonomous daemon that decides when to act form a compiled infrastructure behind feature flags, waiting to ship. The agent that emerges from this architecture does not merely execute instructions. It accumulates operational understanding, session by session, until it is qualified to perform the work autonomously. That is no longer a projection. It is engineering.

But engineering is not adoption.

The architecture has been proven in software engineering, a domain with unusually tight verification loops, structured outputs, and a workforce that actively wants to delegate to agents. The leap to insurance claims processing, legal compliance, or financial operations - where error costs are higher, regulatory scrutiny is heavier, and the humans in the loop are less eager to be replaced - remains an extrapolation from the codebase, not a conclusion demonstrated by it.

Enterprise procurement cycles are slow. Trust is earned in years, not sessions.

The open question is who benefits. The instinct is to assume this is a zero-sum race where the orchestrator captures everything. The architecture says otherwise. The orchestrator sits at the center but calls outward to systems of record, vertical tools, and domain-specific agents. Every call is volume.

An HCM platform that becomes a reliable node in the orchestration graph processes more workflows, not fewer. A domain-specific agent provider that builds specialized colleagues on the same architectural patterns (pointer memory, consolidation, policy enforcement) captures the labor budget in domains that the general-purpose harness has not yet reached, and may never reach with sufficient depth.

The graph expands the market for everyone it touches. The value is distributed by position.

What does not survive is the middle: the platforms that are neither deep enough to be essential nodes nor sophisticated enough to be orchestrators or specialist agent providers in their own right. The convenience layer, the coordination surface, the tool whose value is metadata that the harness generates natively: that is where compression is real and already measurable.

The selloff has been broad. The sorting should be smarter and more precise.

This is not a market reset. It is a re-indexing of value around the center of the orchestration graph.

The closer you are to that center, the more of the future you capture.